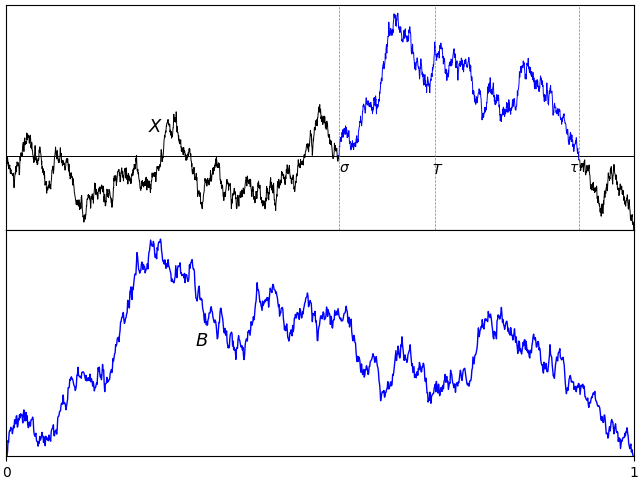

A normalized Brownian excursion is a nonnegative real-valued process with time ranging over the unit interval, and is equal to zero at the start and end time points. It can be constructed from a standard Brownian motion by conditioning on being nonnegative and equal to zero at the end time. We do have to be careful with this definition, since it involves conditioning on a zero probability event. Alternatively, as the name suggests, Brownian excursions can be understood as the excursions of a Brownian motion X away from zero. By continuity, the set of times at which X is nonzero will be open and, hence, can be written as the union of a collection of disjoint (and stochastic) intervals (σ, τ).

In fact, Brownian motion can be reconstructed by simply joining all of its excursions back together. These are independent processes and identically distributed up to scaling. Because of this, understanding the Brownian excursion process can be very useful in the study of Brownian motion. However, there will be infinitely many excursions over finite time periods, so the procedure of joining them together requires some work. This falls under the umbrella of ‘excursion theory’, which is outside the scope of the current post. Here, I will concentrate on the properties of individual excursions.

In order to select a single interval, start by fixing a time T > 0. As $X_T$ is almost surely nonzero, T will be contained inside one such interval (σ, τ). Explicitly,

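$$
\begin{aligned}
\sigma &= \sup\{t \le T \colon X_t = 0\},\\
\tau &= \inf\{t \ge T \colon X_t = 0\},
\end{aligned}
\qquad (1)
$$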

so that σ < T < τ < ∞ almost surely. The path of X across such an interval is $t \mapsto X_{\sigma+t}$ for time t in the range [0, τ − σ]. As it can be either nonnegative or nonpositive, we restrict to the nonnegative case by taking the absolute value. By scaling invariance, $S^{-1/2}X_{tS}$ is also a standard Brownian motion, for each fixed S > 0. Using a stochastic factor S = τ − σ, the width of the excursion is normalized to obtain a continuous process $\{B_t\}_{t\in[0,1]}$ given by

$$
B_t = (\tau-\sigma)^{-1/2}\,\lvert X_{\sigma+t(\tau-\sigma)}\rvert \qquad (2)
$$

By construction, this is strictly positive over 0 < t < 1 and equal to zero at the endpoints t ∈ {0, 1}.
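The construction can be tried out numerically. The following is only a rough sketch on a discrete time grid, not an exact sampler: σ is taken to be the last grid time before T, and τ the first grid time after T, at which the simulated path is zero or has changed sign, so both are accurate only to within one grid step. The function names `straddling_interval` and `sample_excursion`, the step size `dt` and the output grid size `n` are all choices made for this illustration.

```python
import numpy as np

def straddling_interval(x, k):
    """Grid indices (i0, i1) bracketing the excursion of x that contains index k.

    i0 is the last index before k, and i1 the first index after k, at which
    the path is zero or has the opposite sign to x[k]. i1 is None if the
    simulated path has not yet returned to zero after index k.
    Assumes x[0] == 0 and x[k] != 0 (which holds almost surely).
    """
    other = np.flatnonzero(np.sign(x) != np.sign(x[k]))
    left, right = other[other < k], other[other > k]
    i0 = left.max()                       # index 0 always qualifies, as x[0] = 0
    i1 = right.min() if right.size else None
    return i0, i1

def sample_excursion(rng, T=1.0, dt=1e-3, n=201):
    """Approximate sigma, tau and the normalized excursion B on n points of [0, 1]."""
    k = int(round(T / dt))                # grid index of the fixed time T
    steps = rng.normal(0.0, np.sqrt(dt), 2 * k)
    x = np.concatenate([[0.0], np.cumsum(steps)])
    i0, i1 = straddling_interval(x, k)
    while i1 is None:
        # The path has not come back to zero yet: extend it, roughly doubling its length.
        more = x[-1] + np.cumsum(rng.normal(0.0, np.sqrt(dt), x.size))
        x = np.concatenate([x, more])
        i0, i1 = straddling_interval(x, k)
    sigma, tau = i0 * dt, i1 * dt
    seg = np.abs(x[i0:i1 + 1])            # absolute value of the path over [sigma, tau]
    seg[0] = seg[-1] = 0.0                # pin the endpoints to zero on the grid
    t = np.linspace(0.0, 1.0, n)
    # Rescale time to [0, 1] and space by (tau - sigma)^(-1/2).
    b = np.interp(t, np.linspace(0.0, 1.0, seg.size), seg) / np.sqrt(tau - sigma)
    return sigma, tau, t, b

rng = np.random.default_rng(0)
sigma, tau, t, b = sample_excursion(rng)
print(sigma, tau, b.max())
```

Since the return time to zero is heavy-tailed, the path is extended until it returns, rather than discarding paths that fail to do so within a fixed horizon, which would bias the sample.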

The only remaining ambiguity is in the choice of the fixed time T.

Lemma 1 The distribution of B defined by (2) does not depend on the choice of the time T > 0.

Proof: This follows from scaling invariance of Brownian motion. Consider any other fixed positive time T̃, and use the construction above with T̃, σ̃, τ̃, B̃ in place of T, σ, τ, B respectively. We need to show that B̃ and B have the same distribution. Using the scaling factor S = T̃/T, the process $X'_t = S^{-1/2}X_{tS}$ is a standard Brownian motion. Also, σ′ = σ̃/S and τ′ = τ̃/S are random times given in the same way as σ and τ, but with the Brownian motion X′ in place of X in (1). So,

$$
\tilde B_t = (\tilde\tau-\tilde\sigma)^{-1/2}\,\lvert X_{\tilde\sigma+t(\tilde\tau-\tilde\sigma)}\rvert = (\tau'-\sigma')^{-1/2}\,\lvert X'_{\sigma'+t(\tau'-\sigma')}\rvert
$$

has the same distribution as B. ⬜
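The scaling step in the proof of lemma 1 can be checked by hand. As a sketch, assuming that (1) defines σ as the last zero of X before time T and τ as the first zero after it, and with the primed and tilded symbols as in the proof, substituting s = tS and using TS = T̃ gives

$$
\sup\{t \le T \colon X'_t = 0\} = \sup\{t \le T \colon X_{tS} = 0\} = S^{-1}\sup\{s \le \tilde T \colon X_s = 0\} = \tilde\sigma/S = \sigma',
$$

and similarly for τ′ with the infimum over times t ≥ T. Then,

$$
(\tau'-\sigma')^{-1/2} = S^{1/2}(\tilde\tau-\tilde\sigma)^{-1/2},
\qquad
X'_{\sigma'+t(\tau'-\sigma')} = S^{-1/2}X_{\tilde\sigma+t(\tilde\tau-\tilde\sigma)},
$$

so the factors of S cancel, and applying the construction to X′ at time T yields exactly B̃.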

This leads to the definition used here for Brownian excursions.

Definition 2 A continuous process $\{B_t\}_{t\in[0,1]}$ is a Brownian excursion if and only if it has the same distribution as (2) for a standard Brownian motion X and time T > 0.

In fact, there are various alternative, but equivalent, ways in which Brownian excursions can be defined and constructed.

- As a normalized excursion away from zero of a Brownian motion. This is definition 2.

- As a normalized excursion away from zero of a Brownian bridge. This is theorem 6.

- As a Brownian bridge conditioned on being nonnegative. See theorem 9 below.

- As the sample path of a Brownian bridge, translated so that it has minimum value zero at time 0. This is a very interesting and useful method of directly computing excursion sample paths from those of a Brownian bridge. See theorem 12 below, sometimes known as the Vervaat transform.

- As a Markov process with specified transition probabilities. See theorem 15 below.

- As a transformation of Bessel process paths. See theorem 16 below.

- As a Bessel bridge of order 3. This can be represented either as a Bessel process conditioned on hitting zero at time 1, or as the vector norm of a 3-dimensional Brownian bridge. See lemma 17 below.

- As a solution to a stochastic differential equation. See theorem 18 below.
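Two of the constructions in this list are simple enough to try numerically. The sketch below is a discrete-grid approximation under assumptions of my own, not a statement of the theorems: the Brownian bridge is built as W_t − tW_1 on a uniform grid, the Vervaat transform is implemented as a cyclic shift of the bridge path to start at its minimum followed by subtracting that minimum, and the order 3 Bessel bridge is taken as the Euclidean norm of three independent bridges. The function names `brownian_bridge`, `vervaat` and `bessel3_bridge` are made up for this illustration.

```python
import numpy as np

def brownian_bridge(rng, n):
    """Brownian bridge on the grid t_i = i/n, i = 0..n, built as W_t - t * W_1."""
    t = np.linspace(0.0, 1.0, n + 1)
    w = np.concatenate([[0.0], np.cumsum(rng.normal(0.0, np.sqrt(1.0 / n), n))])
    return t, w - t * w[-1]

def vervaat(bridge):
    """Cyclically shift a bridge path to start at its minimum, then subtract the minimum."""
    m = int(np.argmin(bridge))            # grid index of the minimum
    loop = bridge[:-1]                    # drop the duplicated endpoint (both ends are 0)
    shifted = np.concatenate([loop[m:], loop[:m], loop[m:m + 1]])
    return shifted - bridge[m]

def bessel3_bridge(rng, n):
    """Euclidean norm of three independent Brownian bridges on the same grid."""
    comps = [brownian_bridge(rng, n)[1] for _ in range(3)]
    return np.linalg.norm(np.stack(comps), axis=0)

rng = np.random.default_rng(1)
t, br = brownian_bridge(rng, 1000)
e = vervaat(br)                           # approximate excursion via the Vervaat transform
r = bessel3_bridge(rng, 1000)             # approximate excursion via the 3-dimensional bridge
print(e.max(), r.max())
```

Both outputs are nonnegative paths on [0, 1] that vanish at the endpoints, which is consistent with the description of Brownian excursions above. Comparing their distributions would need many samples and is not attempted here.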