In stochastic calculus it is common to work with processes adapted to a filtered probability space $(\Omega,\mathcal{F},\{\mathcal{F}_t\}_{t\ge0},\mathbb{P})$. As with probability space extensions, it can sometimes be necessary to enlarge the underlying space to introduce additional events and processes. For example, many diffusions and local martingales can be expressed as an integral with respect to Brownian motion but, sometimes, the space must first be enlarged to make sure that it includes a Brownian motion to work with. Also, in the theory of stochastic differential equations, finding solutions can sometimes require enlarging the space.

Extending a probability space is a relatively straightforward concept, which I covered in an earlier post. Extending a filtered probability space is the same, except that it also involves enlarging the filtration $\{\mathcal{F}_t\}_{t\ge0}$. It is important to do this in a way which does not destroy properties of existing processes, such as their distributions conditional on the filtration at each time.

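Let’s consider a filtered probability space $(\Omega,\mathcal{F},\{\mathcal{F}_t\}_{t\ge0},\mathbb{P})$. An enlargement

$$\pi\colon(\Omega',\mathcal{F}',\{\mathcal{F}'_t\}_{t\ge0},\mathbb{P}')\to(\Omega,\mathcal{F},\{\mathcal{F}_t\}_{t\ge0},\mathbb{P})$$

is, firstly, an extension of the probability spaces. It is a map from $\Omega'$ to $\Omega$, measurable with respect to $\mathcal{F}'$ and $\mathcal{F}$, and preserving probabilities. So $\mathbb{P}'(\pi^{-1}E)=\mathbb{P}(E)$ for all $E\in\mathcal{F}$. In addition, it is required to be $\mathcal{F}'_t/\mathcal{F}_t$ measurable for each time $t\ge0$, meaning that $\pi^{-1}E\in\mathcal{F}'_t$ for all $E\in\mathcal{F}_t$. Consequently, any adapted process $X_t$ lifts to an adapted process $X^*_t=\pi^*X_t$ on the larger space, defined by $X^*_t(\omega)=X_t(\pi(\omega))$.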
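To make the definition concrete, here is a minimal sketch on a finite sample space. The specific spaces are assumptions chosen for illustration: the original space is two fair coin flips, and the enlarged space adds one independent fair coin. The names `pi`, `preimage` and `X_star` are hypothetical helpers, not notation from the post.

```python
from fractions import Fraction
from itertools import chain, combinations, product

# Original space (illustrative assumption): two fair coin flips.
omega = list(product([-1, 1], repeat=2))
P = {w: Fraction(1, 4) for w in omega}

# Enlarged space (illustrative assumption): add one independent fair coin u.
omega_prime = [(w, u) for w in omega for u in (0, 1)]
P_prime = {wp: P[wp[0]] * Fraction(1, 2) for wp in omega_prime}


def pi(wp):
    """Projection from the enlarged sample space back to the original one."""
    return wp[0]


def preimage(E):
    """The set pi^{-1}E as a subset of the enlarged space."""
    return {wp for wp in omega_prime if pi(wp) in E}


def all_events(points):
    """Every subset of a finite sample space."""
    return chain.from_iterable(
        combinations(points, r) for r in range(len(points) + 1)
    )


# Check P'(pi^{-1}E) = P(E) for every event E of the original space.
preserves_probabilities = all(
    sum(P_prime[wp] for wp in preimage(set(E))) == sum(P[w] for w in E)
    for E in all_events(omega)
)


def X(t, w):
    """Adapted process on the original space: sum of the first t flips."""
    return sum(w[:t])


def X_star(t, wp):
    """Lifted process X*_t(w') = X_t(pi(w')) on the enlarged space."""
    return X(t, pi(wp))
```

The lifted process ignores the extra coin entirely, which is why it stays adapted to any filtration on the enlarged space that contains the pulled-back one.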

As with extensions of probability spaces, this can be considered in two steps. First, we extend to the filtered probability space on $\Omega'$ with induced sigma-algebra $\pi^*\mathcal{F}$ consisting of sets $\pi^{-1}E$ for $E\in\mathcal{F}$, and to the filtration $\pi^*\mathcal{F}_t$. This is essentially a no-op, since events and random variables on the original filtered probability space are in one-to-one correspondence with those on the enlarged space, up to zero probability events. Next, the sigma-algebras are enlarged to $\mathcal{F}'\supseteq\pi^*\mathcal{F}$ and $\mathcal{F}'_t\supseteq\pi^*\mathcal{F}_t$. This is where new random events are added to the event space and filtration.

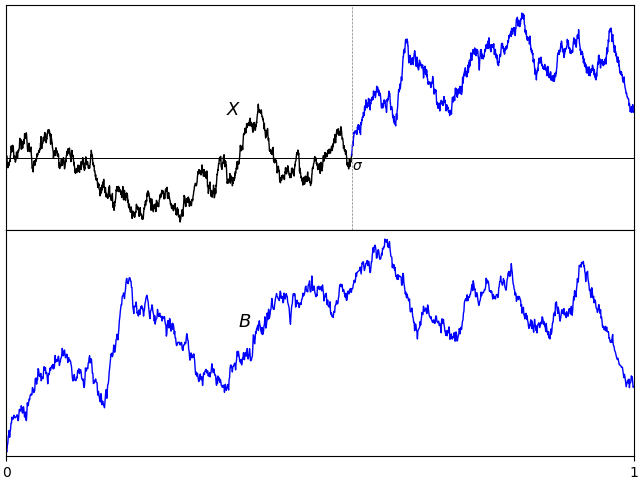

Such arbitrary extensions are too general for many uses in stochastic calculus where we merely want to add in some additional source of randomness. Consider, for example, a standard Brownian motion $B$ defined on the original space so that, for any times $s<t$, $B_t-B_s$ is normal and independent of $\mathcal{F}_s$. Does it necessarily lift to a Brownian motion on the enlarged space? The answer to this is no! It need not be the case that $B^*_t-B^*_s$ is independent of $\mathcal{F}'_s$. For an extreme case, consider the situation where $(\Omega',\mathcal{F}',\mathbb{P}')=(\Omega,\mathcal{F},\mathbb{P})$ and $\pi$ is the identity, so there is no enlargement of the sample space. If the filtration is extended to the maximum, $\mathcal{F}'_t=\mathcal{F}$, consider what happens to our Brownian motion. The increment $B_t-B_s$ is $\mathcal{F}'_s$-measurable, so is not independent of it. In fact, conditioned on $\mathcal{F}'_0$, the entire path of $B$ is deterministic. It is definitely not a Brownian motion with respect to this new filtration. Similarly, martingales, submartingales and supermartingales will not remain as such if we pass to this enlarged filtration.
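The same failure can be seen in a discrete analogue. The sketch below swaps the Brownian motion for a three-step simple random walk on a finite space (an assumption made so that conditional expectations can be computed exactly), and compares the natural filtration with the maximal one. The helper `cond_exp` is hypothetical and relies on the measure being uniform.

```python
from fractions import Fraction
from itertools import product

N = 3
omega = list(product([-1, 1], repeat=N))  # uniform measure on coin-flip paths


def S(k, w):
    """Simple random walk: sum of the first k steps."""
    return sum(w[:k])


def cond_exp(f, atom_key):
    """E[f | G], where the atoms of G are the level sets of atom_key.

    Under the uniform measure this is just the average of f over each atom.
    Returns a dict mapping each sample point to the conditional expectation.
    """
    atoms = {}
    for w in omega:
        atoms.setdefault(atom_key(w), []).append(w)
    result = {}
    for members in atoms.values():
        value = sum(Fraction(f(w)) for w in members) / len(members)
        for w in members:
            result[w] = value
    return result


def increment(w):
    """The increment S_2 - S_1, which equals the second step."""
    return S(2, w) - S(1, w)


# Natural filtration at time 1: only the first step is known.
natural = cond_exp(increment, lambda w: w[:1])

# Maximal filtration F'_1 = F: the whole path is known at every time.
maximal = cond_exp(increment, lambda w: w)
```

Under the natural filtration the conditional expectation of the increment is zero on every path, so the walk is a martingale. Under the maximal filtration the conditional expectation is the increment itself, so the martingale property is lost.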

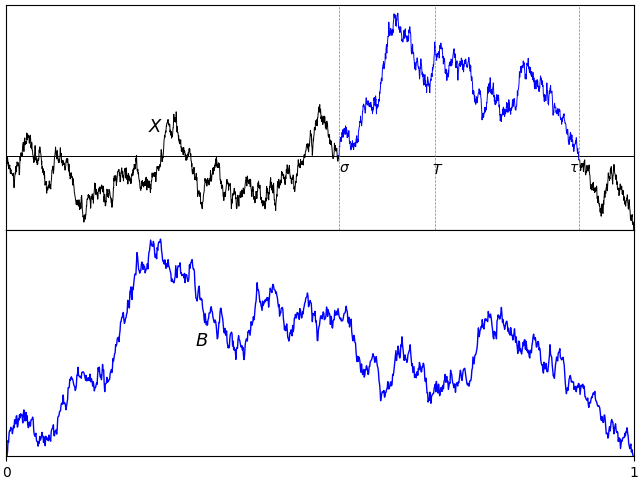

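The idea is that, if $Y=\mathbb{E}[X\mid\mathcal{F}_t]$ for random variables $X$, $Y$ defined on our original probability space, then this relation should continue to hold in the extension. It is required that $\pi^*Y=\mathbb{E}[\pi^*X\mid\mathcal{F}'_t]$, with the expectation taken under $\mathbb{P}'$. This is exactly relative independence of $\mathcal{F}'_t$ and $\pi^*\mathcal{F}$ over $\pi^*\mathcal{F}_t$.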

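Recall that two sigma-algebras $\mathcal{G}$ and $\mathcal{H}$ are relatively independent over a third $\mathcal{K}\subseteq\mathcal{G}\cap\mathcal{H}$ if

$$\mathbb{P}(A\cap B\mid\mathcal{K})=\mathbb{P}(A\mid\mathcal{K})\,\mathbb{P}(B\mid\mathcal{K})$$

almost surely, for all $A\in\mathcal{G}$ and $B\in\mathcal{H}$. The following properties are each equivalent to this definition:

$$\mathbb{E}[XY\mid\mathcal{K}]=\mathbb{E}[X\mid\mathcal{K}]\,\mathbb{E}[Y\mid\mathcal{K}]$$

for all bounded $\mathcal{G}$-measurable random variables $X$ and $\mathcal{H}$-measurable $Y$.

$$\mathbb{E}[X\mid\mathcal{H}]=\mathbb{E}[X\mid\mathcal{K}]$$

for all bounded $\mathcal{G}$-measurable $X$.

$$\mathbb{E}[X\mid\mathcal{G}]=\mathbb{E}[X\mid\mathcal{K}]$$

for all bounded $\mathcal{H}$-measurable $X$.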

This leads us to the idea of a standard extension of filtered probability spaces.

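Definition 1 An extension of filtered probability spaces

$$\pi\colon(\Omega',\mathcal{F}',\{\mathcal{F}'_t\}_{t\ge0},\mathbb{P}')\to(\Omega,\mathcal{F},\{\mathcal{F}_t\}_{t\ge0},\mathbb{P})$$

is standard if, for each time $t\ge0$, the sigma-algebras $\mathcal{F}'_t$ and $\pi^*\mathcal{F}$ are relatively independent over $\pi^*\mathcal{F}_t$.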
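As a sanity check on the definition, the following sketch tests the equivalent condition $\mathbb{E}[\pi^*X\mid\mathcal{F}'_t]=\pi^*\mathbb{E}[X\mid\mathcal{F}_t]$ on a small finite example. Everything here is an illustrative assumption: the original space is two fair coin flips with its natural filtration, the enlarged space adds one independent coin, and two candidate filtrations are compared. One adjoins the extra coin at every time, and one "peeks" a flip ahead. By linearity it is enough to test indicator functions of single points.

```python
from fractions import Fraction
from itertools import product

omega = list(product([-1, 1], repeat=2))               # two fair flips
omega_prime = [(w, u) for w in omega for u in (0, 1)]  # plus an extra coin u


def pi(wp):
    """Projection from the enlarged space onto the original one."""
    return wp[0]


def cond_exp(f, points, atom_key):
    """Conditional expectation under the uniform measure on `points`,
    given the sigma-algebra whose atoms are the level sets of atom_key."""
    atoms = {}
    for p in points:
        atoms.setdefault(atom_key(p), []).append(p)
    return {
        p: sum(Fraction(f(q)) for q in members) / len(members)
        for members in atoms.values()
        for p in members
    }


def is_standard_at(t, enlarged_key):
    """Check E'[pi^*X | F'_t] = pi^* E[X | F_t] for point indicators X."""
    for target in omega:
        def X(w, target=target):
            return 1 if w == target else 0

        base = cond_exp(X, omega, lambda w: w[:t])
        lifted = cond_exp(lambda wp: X(pi(wp)), omega_prime, enlarged_key)
        if any(lifted[wp] != base[pi(wp)] for wp in omega_prime):
            return False
    return True


def product_key(t):
    """F'_t generated by the first t flips together with the extra coin."""
    return lambda wp: (wp[0][:t], wp[1])


def peeking_key(t):
    """F'_t generated by the first t + 1 flips: it sees one step ahead."""
    return lambda wp: wp[0][:t + 1]


product_is_standard = all(is_standard_at(t, product_key(t)) for t in range(3))
peeking_is_standard = all(is_standard_at(t, peeking_key(t)) for t in range(3))
```

In this toy setting the product-style filtration passes the check at every time, while the peeking filtration fails, since it reveals information about the original space ahead of the original filtration.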