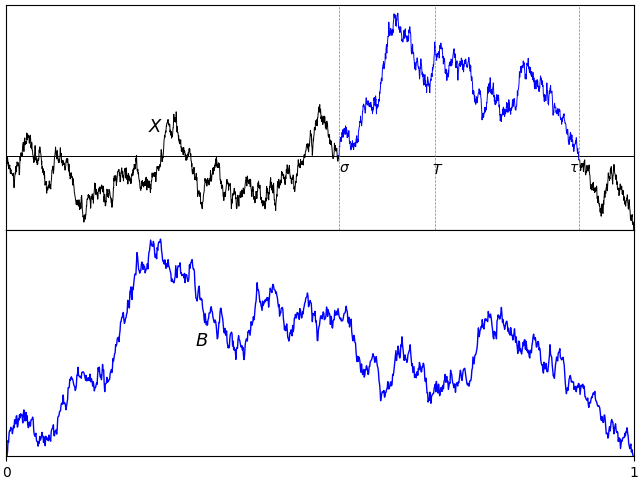

A normalized Brownian excursion is a nonnegative real-valued process with time ranging over the unit interval, and is equal to zero at the start and end time points. It can be constructed from a standard Brownian motion by conditioning on being nonnegative and equal to zero at the end time. We do have to be careful with this definition, since it involves conditioning on a zero probability event. Alternatively, as the name suggests, Brownian excursions can be understood as the excursions of a Brownian motion X away from zero. By continuity, the set of times at which X is nonzero will be open and, hence, can be written as the union of a collection of disjoint (and stochastic) intervals (σ, τ).

In fact, Brownian motion can be reconstructed by simply joining all of its excursions back together. These are independent processes and identically distributed up to scaling. Because of this, understanding the Brownian excursion process can be very useful in the study of Brownian motion. However, there will be infinitely many excursions over finite time periods, so the procedure of joining them together requires some work. This falls under the umbrella of ‘excursion theory’, which is outside the scope of the current post. Here, I will concentrate on the properties of individual excursions.

In order to select a single interval, start by fixing a time T > 0. As $X_T$ is almost surely nonzero, T will be contained inside one such interval (σ, τ). Explicitly,

$$\sigma=\sup\{t\le T\colon X_t=0\},\qquad\tau=\inf\{t\ge T\colon X_t=0\},\tag{1}$$

so that σ < T < τ < ∞ almost surely. The path of X across such an interval is $t\mapsto X_{\sigma+t}$ for time t in the range [0, τ − σ]. As it can be either positive or negative, we restrict to the nonnegative case by taking the absolute value. By scaling invariance, $S^{-1/2}X_{tS}$ is also a standard Brownian motion, for each fixed S > 0. Using the random factor S = τ − σ, the width of the excursion is normalized to obtain a continuous process $\{B_t\}_{t\in[0,1]}$ given by

$$B_t=(\tau-\sigma)^{-1/2}\big|X_{\sigma+t(\tau-\sigma)}\big|.\tag{2}$$

By construction, this is strictly positive over 0 < t < 1 and equal to zero at the endpoints t ∈ {0, 1}.
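To make the construction concrete, here is a minimal Python sketch that approximates it on a simulated path. It assumes a Gaussian random walk with step dt as a stand-in for X, and takes σ and τ (the last zero before T and the first zero after T) to be the grid points just outside the run of same-sign values containing T, before rescaling as in (2). The function `excursion_about` and its parameters are hypothetical, and the output is only a grid approximation, so its endpoint values are small rather than exactly zero.

```python
import math
import random
from typing import List, Optional, Tuple


def excursion_about(T: float = 1.0, dt: float = 0.01, max_steps: int = 100_000,
                    seed: int = 0) -> Optional[List[Tuple[float, float]]]:
    """Grid approximation of the normalized excursion (2) about time T.

    Returns (normalized time, B value) pairs, or None if the walk does not
    change sign after T within max_steps grid steps.
    """
    rng = random.Random(seed)
    i_T = round(T / dt)
    x = [0.0]
    for _ in range(i_T):                          # simulate the walk up to time T
        x.append(x[-1] + rng.gauss(0.0, math.sqrt(dt)))
    sign_T = 1.0 if x[i_T] > 0 else -1.0
    j = i_T
    while x[j] * sign_T > 0:                      # extend until the sign changes after T
        if len(x) > max_steps:
            return None
        x.append(x[-1] + rng.gauss(0.0, math.sqrt(dt)))
        j += 1
    i = i_T
    while x[i] * sign_T > 0:                      # walk back to the last sign change before T
        i -= 1
    width = j * dt - i * dt                       # grid stand-in for tau - sigma
    return [((k - i) * dt / width, abs(x[k]) / math.sqrt(width)) for k in range(i, j + 1)]


result = excursion_about(T=1.0, dt=0.01, seed=0)
if result is not None:
    print(len(result), result[0], result[-1])
```

Refining `dt` typically brings the two endpoint values closer to zero, in line with the exact construction.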

The only remaining ambiguity is in the choice of the fixed time T.

Lemma 1 The distribution of B defined by (2) does not depend on the choice of the time T > 0.

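Proof: This follows from scaling invariance of Brownian motion. Consider any other fixed positive time $\tilde T$, and use the construction above with $\tilde T$, $\tilde\sigma$, $\tilde\tau$, $\tilde B$ in place of T, σ, τ, B respectively. We need to show that $\tilde B$ and B have the same distribution. Using the scaling factor $S=\tilde T/T$, the process $X'_t=S^{-1/2}X_{tS}$ is a standard Brownian motion. Also, $\sigma'=\tilde\sigma/S$ and $\tau'=\tilde\tau/S$ are random times given in the same way as σ and τ, but with the Brownian motion X′ in place of X in (1). So,
$$\tilde B_t=(\tilde\tau-\tilde\sigma)^{-1/2}\big|X_{\tilde\sigma+t(\tilde\tau-\tilde\sigma)}\big|=(\tau'-\sigma')^{-1/2}\big|X'_{\sigma'+t(\tau'-\sigma')}\big|$$
has the same distribution as B. ⬜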

This leads to the definition used here for Brownian excursions.

Definition 2 A continuous process $\{B_t\}_{t\in[0,1]}$ is a Brownian excursion if and only if it has the same distribution as (2) for a standard Brownian motion X and time T > 0.

In fact, there are various alternative — but equivalent — ways in which Brownian excursions can be defined and constructed.

- As a normalized excursion away from zero of a Brownian motion. This is definition 2.

- As a normalized excursion away from zero of a Brownian bridge. This is theorem 6.

- As a Brownian bridge conditioned on being nonnegative. See theorem 9 below.

- As the sample path of a Brownian bridge, shifted cyclically in time so that its minimum occurs at time 0, and translated vertically so that this minimum value is zero. This is a very interesting and useful method of directly computing excursion sample paths from those of a Brownian bridge. See theorem 12 below; this is sometimes known as the Vervaat transform.

- As a Markov process with specified transition probabilities. See theorem 15 below.

- As a transformation of Bessel process paths, see theorem 16 below.

- As a Bessel bridge of order 3. This can be represented either as a Bessel process conditioned on hitting zero at time 1, or as the vector norm of a 3-dimensional Brownian bridge. See theorem 17 below.

- As a solution to a stochastic differential equation. See theorem 18 below.

An important property of definition (2) is that the normalized excursion is independent of the times σ and τ. Hence, if we want to construct a non-normalized excursion on the random interval [σ, τ], we can start with the normalized version and, independently, construct the times σ, τ.

Lemma 3 For a standard Brownian motion X and fixed positive time T, the Brownian excursion $\{B_t\}_{t\in[0,1]}$ defined by (2) is independent of the random times σ, τ.

Proof: Start by choosing a fixed time 0 < S < T and consider the stopping time
$$\upsilon=\inf\{t\ge S\colon X_t=0\}.$$
Then, υ ≤ T if and only if σ ≥ S and, by the strong Markov property, $X_{\upsilon+t}$ is a standard Brownian motion on this event, independent of υ. By scaling invariance,
$$Y_t=\Big(\frac{T}{T-\upsilon}\Big)^{1/2}X_{\upsilon+t(T-\upsilon)/T}$$
is also a standard Brownian motion, conditioned on σ ≥ S. Furthermore, time T for Y corresponds to time T for X, and the excursion of Y about time T is equal to B so, conditioned on σ ≥ S, B still has the distribution of a Brownian excursion. Hence, B is independent of the event {σ ≥ S} and, therefore, is independent of {σ < S}.

Next, choose times 0 < S < T < U and let
$$\tilde\sigma=\sup\{t\le U\colon X_t=0\}.$$
Then, $\tilde\sigma<S$ if and only if X does not hit zero in the interval [S, U], which is equivalent to σ < S and τ > U and, on this event, the excursion B defined about time T coincides with that defined about U. Furthermore, by the argument above applied with U in place of T, the Brownian excursion defined about the time U is independent of this event, showing that B is independent of the event {σ < S, τ > U}. Finally, as these events form a pi-system generating the sigma-algebra of (σ, τ), all events which are measurable in terms of σ and τ are independent of B, by the pi-system lemma. ⬜

The independence result can be taken further to decompose the Brownian motion into independent components. The term Rademacher distribution describes a random variable which takes the values ±1, each with probability 1/2.

Theorem 4 Let X be a standard Brownian motion, T > 0 be a fixed time and σ < T < τ be as defined by (1). Then, the following collections of random variables are all independent of each other,

- the process $\{B_t\}_{t\in[0,1]}$ defined by (2), which is a Brownian excursion.

- the random times {σ, τ}.

- $\operatorname{sgn}(X_T)$, which has the Rademacher distribution.

- the process $\sigma^{-1/2}X_{t\sigma}$ over 0 ≤ t ≤ 1, which is a standard Brownian bridge.

- the process $X_{\tau+t}$ over t ≥ 0, which is a standard Brownian motion.

Proof: By the strong Markov property, $X_{\tau+t}$ is a standard Brownian motion independent of $\mathcal F_\tau$. Since the other collections are $\mathcal F_\tau$-measurable, this shows that $X_{\tau+t}$ is independent of all of them.

Next, by lemma 15 of the post on Brownian bridges, $Y_t\equiv\sigma^{-1/2}X_{t\sigma}$ is a Brownian bridge independent of $1_{[\sigma,\infty)}X$ and, since the other collections are measurable functions of this, Y is independent of all of them.

It only remains to show that the first three collections listed are independent. The distribution of standard Brownian motion is unchanged under flipping the sign, X → −X, and this transformation does not affect the excursion or the times {σ, τ} but flips the sign of $X_T$. Hence $(B,\sigma,\tau,\operatorname{sgn}(X_T))$ has the same distribution as $(B,\sigma,\tau,-\operatorname{sgn}(X_T))$, so the sign of $X_T$ must have the Rademacher distribution independently of the first two collections. Finally, we just need to show that the first two collections are independent, but this is stated by lemma 3 above. ⬜

All of the terms in the decomposition of theorem 4 have already-known distributions except for the excursion, which is the subject of this post, and the random times σ, τ. For completeness, we can compute the distribution of these times, which is given by a version of the arcsine law.

Lemma 5 Let X be a standard Brownian motion, T > 0 be a fixed time and σ < T < τ be as defined by (1). Then,
$$\mathbb P(\sigma<s,\ \tau>t)=\frac2\pi\arcsin\sqrt{s/t}$$
for all times 0 ≤ s ≤ T ≤ t.

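Proof: The event in question is equivalent to X being nonzero on the interval [s, t] or, equivalently, that the stopping time $\upsilon\equiv\inf\{u\ge s\colon X_u=0\}$ is greater than t. By the strong Markov property, conditioned on $\mathcal F_\upsilon$ and on the event υ ≤ t, $X_t$ has a symmetric distribution, so has equal chance of being greater than 0 as being less than 0. So, it has exactly the same probability of being the same sign as $X_s$ as having the opposite sign. This is known as the reflection principle and, as $X_s$ and $X_t$ have the same sign whenever υ > t, it gives
$$\mathbb P(\sigma<s,\ \tau>t)=\mathbb P(X_sX_t>0)-\mathbb P(X_sX_t<0)=1-2\,\mathbb P(X_sX_t<0).$$
Hence, writing $X_s=-\sqrt s\,V$ and $X_t-X_s=\sqrt{t-s}\,U$ for independent standard normals U, V, so that $X_t=\sqrt t\,(U\cos\theta-V\sin\theta)$, and using symmetry to write $\mathbb P(X_sX_t<0)=2\,\mathbb P(X_s<0<X_t)$, we obtain
$$\mathbb P(\sigma<s,\ \tau>t)=1-4\,\mathbb P(U\cos\theta>V\sin\theta>0),$$
where θ is the angle between 0 and π/2 such that $\sin\theta=\sqrt{s/t}$ and $\cos\theta=\sqrt{1-s/t}$. By symmetry, the angle between the x-axis and (U, V) is uniformly distributed over [0, 2π], and the condition that $U\cos\theta>V\sin\theta>0$ says that it lies between 0 and π/2 − θ. Hence,
$$\mathbb P(U\cos\theta>V\sin\theta>0)=\frac{\pi/2-\theta}{2\pi},$$
giving
$$\mathbb P(\sigma<s,\ \tau>t)=1-4\cdot\frac{\pi/2-\theta}{2\pi}=\frac{2\theta}\pi=\frac2\pi\arcsin\sqrt{s/t},$$
as required. ⬜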
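As a quick numerical sanity check of the last steps, the sketch below estimates $1-2\,\mathbb P(X_sX_t<0)$ by sampling only the Gaussian pair $(X_s,X_t)$, and compares it with the closed form $\frac2\pi\arcsin\sqrt{s/t}$. The function names and sample size are illustrative choices, and the Monte Carlo value agrees only up to sampling error.

```python
import math
import random


def arcsine_prob(s: float, t: float) -> float:
    """Closed form of lemma 5: P(sigma < s, tau > t) = (2/pi) arcsin(sqrt(s/t))."""
    return 2.0 / math.pi * math.asin(math.sqrt(s / t))


def reflection_estimate(s: float, t: float, n_samples: int = 200_000, seed: int = 1) -> float:
    """Monte Carlo estimate of 1 - 2 P(X_s X_t < 0) for a standard Brownian motion X.

    Only the pair (X_s, X_t) is sampled; by the reflection principle this
    quantity equals P(sigma < s, tau > t).
    """
    rng = random.Random(seed)
    opposite = 0
    for _ in range(n_samples):
        x_s = math.sqrt(s) * rng.gauss(0.0, 1.0)            # X_s ~ N(0, s)
        x_t = x_s + math.sqrt(t - s) * rng.gauss(0.0, 1.0)   # add an independent N(0, t - s) increment
        if x_s * x_t < 0:
            opposite += 1
    return 1.0 - 2.0 * opposite / n_samples


# With s = 1 and t = 4, arcsin(1/2) = pi/6, so the exact value is 1/3.
estimate = reflection_estimate(1.0, 4.0)
print(arcsine_prob(1.0, 4.0), estimate)
```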

The fact that the excursion B is independent of (σ, τ) and of the Brownian motion outside of this interval means that if we construct the excursions about a set of distinct times then, conditional on their intervals being disjoint, the excursions are independent. This is the situation in figure 1 above where several excursions are plotted for the same Brownian motion path. We could take this idea to its logical conclusion and consider the set of all excursions of a Brownian motion sample path. These will be independent of each other and of the zero-set of the original path, so the Brownian motion can be reconstructed by ‘filling in the gaps’ of the set of times where it is zero. This is best understood as part of excursion theory, so I will not go deeply into it here.

Since the original Brownian motion sample path can be reconstructed from the collections listed, theorem 4 provides a useful alternative construction of the Brownian motion from several independent components.

Brownian bridge excursions

Rather than starting with Brownian motion, we can equally well construct excursions from the sample paths of a Brownian bridge. This works in exactly the same way — compare figures 2 and 3.

Theorem 4 also carries across to this case in a straightforward fashion.

Theorem 6 Let 0 < T < 1 be a fixed time, X be a standard Brownian bridge, and σ < T < τ be as defined by (1). Then the process $\{B_t\}_{t\in[0,1]}$ defined by (2),
$$B_t=(\tau-\sigma)^{-1/2}\big|X_{\sigma+t(\tau-\sigma)}\big|,$$
is a Brownian excursion. More precisely, the following collections of random variables are all independent of each other,

- the Brownian excursion B defined by (2).

- the random times {σ, τ}.

- $\operatorname{sgn}(X_T)$, which has the Rademacher distribution.

- the process $\sigma^{-1/2}X_{t\sigma}$ over 0 ≤ t ≤ 1, which is a standard Brownian bridge.

- the process $(1-\tau)^{-1/2}X_{\tau+t(1-\tau)}$ over 0 ≤ t ≤ 1, which is a standard Brownian bridge.

Proof: Let us start with the case where X is a Brownian motion, so that theorem 4 can be applied. Then, the first 4 collections of random variables in the statement of the theorem are independent with the stated distributions and, independently, $Y_t=X_{\tau+t}$ is a standard Brownian motion.

Defining the random time $\upsilon=\sup\{t\le1\colon X_t=0\}$, lemma 15 of the post on Brownian bridges says that $\tilde X_t=\upsilon^{-1/2}X_{t\upsilon}$ is a Brownian bridge independent of υ.

Restricting to the event υ > T, we have σ < τ < υ. Writing $\tilde T=T/\upsilon$, then $\tilde\sigma=\sigma/\upsilon$ is the last time before $\tilde T$ at which $\tilde X_{\tilde\sigma}=0$ and $\tilde\tau=\tau/\upsilon$ is the first time after $\tilde T$ for which $\tilde X_{\tilde\tau}=0$. So, the following collections of random variables are independent:

- $B_t=(\tau-\sigma)^{-1/2}|X_{\sigma+t(\tau-\sigma)}|=(\tilde\tau-\tilde\sigma)^{-1/2}|\tilde X_{\tilde\sigma+t(\tilde\tau-\tilde\sigma)}|$, which is a Brownian excursion.

- The random times $\{\tilde\sigma,\tilde\tau\}$.

- $\operatorname{sgn}(X_T)=\operatorname{sgn}(\tilde X_{\tilde T})$, which has the Rademacher distribution.

- The process $\sigma^{-1/2}X_{t\sigma}=\tilde\sigma^{-1/2}\tilde X_{t\tilde\sigma}$ over 0 ≤ t ≤ 1, which is a Brownian bridge.

- The process $\tilde Y_t=(1-\tau)^{-1/2}Y_{t(1-\tau)}$, which is a standard Brownian motion (by the scaling property).

The process in the last bullet point of the statement of the theorem (defined in terms of the Brownian bridge $\tilde X$) can be expressed as
$$(1-\tilde\tau)^{-1/2}\tilde X_{\tilde\tau+t(1-\tilde\tau)}=\rho^{-1/2}\tilde Y_{t\rho},$$
where $\rho=(\upsilon-\tau)/(1-\tau)$ is the final time before 1 for which $\tilde Y_\rho=0$. Lemma 15 of the post on Brownian bridges says that this is a Brownian bridge.

We have proven the result in terms of the Brownian bridge $\tilde X$ and with the fixed time T replaced by $\tilde T=T/\upsilon$, where υ is a random variable taking values in (T, 1] independently of $\tilde X$. Conditioning on υ > 1 − ϵ for arbitrarily small ϵ > 0 gives the result. ⬜

The arcsine law for the distribution of the times σ, τ stated in lemma 5 carries across to the construction from Brownian bridges.

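Lemma 7 Let X be a standard Brownian bridge, 0 < T < 1 be a fixed time and σ < T < τ be as defined by (1). Then,
$$\mathbb P(\sigma<s,\ \tau>t)=\frac2\pi\arcsin\sqrt{\frac{s(1-t)}{t(1-s)}}$$
for all times 0 ≤ s ≤ T ≤ t ≤ 1.

Proof: Using the fact that $Y_t=(1+t)X_{t/(1+t)}$ is a Brownian motion, vanishing at times $\tilde t=t/(1-t)$ whenever X vanishes at t, lemma 5 applied to Y with the fixed time T/(1 − T) gives
$$\mathbb P(\sigma<s,\ \tau>t)=\frac2\pi\arcsin\sqrt{\frac{s/(1-s)}{t/(1-t)}}=\frac2\pi\arcsin\sqrt{\frac{s(1-t)}{t(1-s)}},$$
as required. ⬜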

In passing, I note that the fact that we can construct excursions from Brownian bridges, whose law is symmetric under time reversal, means that the Brownian excursion distribution is also symmetric under time reversal.

Lemma 8 If $B_t$ is a Brownian excursion then so is $B_{1-t}$.

Proof: By theorem 6, we can suppose that B is the normalized excursion of a Brownian bridge X about a time 0 < T < 1. Then, $B_{1-t}$ is the normalized excursion of $X_{1-t}$ about the time 1 − T, so is also a Brownian excursion. ⬜

As promised above, we can show that excursions can be expressed as Brownian bridges conditioned on being positive but, as this requires conditioning on a zero probability event, we need to be careful. We approximate by conditioning on the bridge being close to a positive process, and show that this converges in the limit. There are various different ways in which this approximation can be done. Here, I consider conditioning on the process being positive for all t in the unit interval except near the end-points. This turns out to be relatively straightforward, although other methods also work (such as conditioning on the minimum of the process being not too small, or on the process being nonnegative at finite sets of times).

By definition, the distribution of a process X under probability measures $\mathbb P_n$ converges weakly to its law under $\mathbb P$ if $\mathbb E_{\mathbb P_n}[f(X)]\to\mathbb E_{\mathbb P}[f(X)]$ for every bounded continuous function f of the paths of X, where the paths are given the topology of uniform convergence.

Theorem 9 Let $\{X_t\}_{t\in[0,1]}$ be a Brownian bridge, and $0<a_n<b_n<1$ be reals with $a_n\to0$ and $b_n\to1$. Then, the distribution of X conditioned on $\inf_{t\in[a_n,b_n]}X_t>0$ converges weakly to that of a Brownian excursion as n → ∞.

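Proof: Fixing a time 0 < T < 1, theorem 6 says that we can write
$$X_t=\begin{cases}\sigma^{1/2}Y_{t/\sigma},&0\le t\le\sigma,\\U(\tau-\sigma)^{1/2}B_{(t-\sigma)/(\tau-\sigma)},&\sigma\le t\le\tau,\\(1-\tau)^{1/2}Z_{(t-\tau)/(1-\tau)},&\tau\le t\le1,\end{cases}$$
for Brownian bridges Y, Z, random times {σ, τ}, Rademacher variable U and Brownian excursion B. Furthermore, each of these terms is independent. In particular, on the event U = 1, X converges uniformly to B as σ → 0 and τ → 1.

So long as T is in the interval $(a_n,b_n)$, the event
$$S_n=\Big\{\inf_{t\in[a_n,b_n]}X_t>0\Big\}$$
is equivalent to $\sigma<a_n$, $\tau>b_n$ and U = 1. So, conditioned on $S_n$, as n goes to infinity we have σ → 0 and τ → 1, and the distribution of X converges weakly to that of B which, by independence with $S_n$, is a Brownian excursion. ⬜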

The Vervaat transformation

I now describe an interesting link between Brownian bridges and excursions. There is a straightforward conversion from Brownian bridges to excursions on a path-by-path basis.

If X is a Brownian bridge, let $\tau^*$ be the time at which it attains its minimum, so that $X_t\ge X_{\tau^*}$ for all times t in the unit interval. This minimum is achieved at a unique time, almost surely. We switch around the paths of X on the intervals $[0,\tau^*]$ and $[\tau^*,1]$, and subtract the minimum value $X_{\tau^*}$. The resulting sample path B has the Brownian excursion distribution. That is, $B_t$ is equal to $X_{\tau^*+t}-X_{\tau^*}$ when $t\le1-\tau^*$ and $X_{\tau^*+t-1}-X_{\tau^*}$ when $t\ge1-\tau^*$. Equivalently, $B_t=X_s-X_{\tau^*}$ where $s=\tau^*+t$ modulo 1.
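The path surgery just described is easy to carry out on a discretized path. The following Python sketch, an illustration rather than a reference implementation, samples a Brownian bridge on a grid of n steps via $X_t=W_t-tW_1$, uses the grid minimum as a stand-in for $\tau^*$, and rotates the path cyclically. The helper names `brownian_bridge_grid` and `vervaat` are hypothetical.

```python
import random
from typing import List


def brownian_bridge_grid(n: int, seed: int = 0) -> List[float]:
    """Return X_{k/n} for k = 0..n of a Brownian bridge, built as X_t = W_t - t W_1."""
    rng = random.Random(seed)
    w = [0.0]
    for _ in range(n):
        w.append(w[-1] + rng.gauss(0.0, (1.0 / n) ** 0.5))
    return [w[k] - (k / n) * w[n] for k in range(n + 1)]


def vervaat(bridge: List[float]) -> List[float]:
    """Discrete Vervaat transform B_{k/n} = X_{{(m + k)/n}} - X_{m/n}.

    `bridge` holds X_{k/n} for k = 0..n with X_0 = X_1 = 0. Time is treated as
    periodic (index n is identified with index 0), and m is the grid index of
    the minimum over k = 0..n-1.
    """
    n = len(bridge) - 1
    m = min(range(n), key=lambda k: bridge[k])
    return [bridge[(m + k) % n] - bridge[m] for k in range(n + 1)]


x = brownian_bridge_grid(1000, seed=3)
b = vervaat(x)
print(min(b), b[0], b[-1])  # nonnegative, starting and ending at zero
```

Because the rotated path is a rearrangement of the original values shifted down by the minimum, `max(b)` equals `max(x) - min(x)` exactly, which is the discrete form of the range identity discussed below.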

Going in the opposite direction, starting with an excursion B, choose a time υ uniformly distributed on the unit interval, independently of B. Switching around the paths of B on the intervals [0, υ] and [υ, 1], and subtracting $B_\upsilon$, gives a Brownian bridge.

This mapping between bridges and excursions is demonstrated in figure 4 below. The top plot is of a Brownian bridge sample path. The path before the minimum is attained is shown in blue, and the part after is in green. Switching the order of these segments and shifting vertically to make it start from zero gives the Brownian excursion path in the bottom plot.

This map from Brownian bridge paths to excursions was discovered by Vervaat, and is known as the Vervaat transform.

As was noted in the posts on Brownian bridges and their Fourier expansions, for a continuous process $X_t$ with time index t ranging over the unit interval, it can be useful to extend the time index to the entire real line. This is done by making it periodic so that $X_{t+n}=X_t$ for integer n. The periodic extension can be written as $X_{\{t\}}$ where {t} is the fractional part of t. So long as $X_0=X_1$, the extension will be continuous.

With this notation, the Vervaat transform says that if X is a Brownian bridge then $X_{\{\tau^*+t\}}-X_{\tau^*}$ is an excursion. That is, if we translate the time index of the bridge so that its minimum is at time 0, and translate its value so that it starts at 0, then we get a Brownian excursion. Immediate consequences of this result include the fact that the range $\max_tX_t-\min_tX_t$ of a Brownian bridge X has the same distribution as the maximum of a Brownian excursion. It is interesting to note that when these distributions were calculated in 1976 by Chung (Excursions in Brownian motion) and Kennedy (The Distribution of the Maximum Brownian Excursion), the fact that they are the same was seen as a curiosity. Vervaat only published his paper in 1979, providing the probabilistic reason for this apparent coincidence.

I start by proving a discrete-time version of the transform, which is much more straightforward.

Lemma 10 Let $\{X_t\}_{t\in[0,1]}$ be a Brownian bridge and n be a positive integer. Then, $X_t$ almost surely has a unique minimum for t in the set $\{k/n\colon k=0,1,\ldots,n-1\}$. If we let $\tau_n$ be the time at which this minimum is attained, then the process $X_{\{\tau_n+t\}}-X_{\tau_n}$ has the same distribution as X has when conditioned on the event
$$S_n=\{X_{k/n}>0\text{ for all }k=1,2,\ldots,n-1\}.$$

Proof: From the Brownian bridge covariance structure, for times 0 ≤ s < t < 1, $X_t-X_s$ is normal with nonzero variance, so is almost surely not equal to 0. Hence, for time restricted to a finite subset of [0, 1), the values of $X_t$ are all distinct and so it has a unique minimum.

Now choose a random variable υ uniformly distributed on {0/n, 1/n, …, (n − 1)/n} independently of X. As previously shown, the process $Y_t=X_{\{\upsilon+t\}}-X_\upsilon$ is also a Brownian bridge, so has the same distribution as X. As $\mathbb P(\upsilon=\tau_n\mid X)=1/n$ does not depend on X, conditioning on $\upsilon=\tau_n$ does not affect the distribution of X, and gives $Y_t=X_{\{\tau_n+t\}}-X_{\tau_n}$.

On the other hand, $\upsilon=\tau_n$ if and only if $Y_t>0$ for t in {1/n, 2/n, …, (n − 1)/n}, so conditioning on this event gives the same distribution for Y as X has conditioned on $S_n$. ⬜
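The counting step in this proof can be checked on a sampled path: out of the n cyclic grid shifts, exactly one (the shift to the grid minimum) leaves all of the values $Y_{1/n},\ldots,Y_{(n-1)/n}$ strictly positive. A Python sketch, with hypothetical helper names:

```python
import random
from typing import List


def bridge_grid(n: int, seed: int = 0) -> List[float]:
    """Return X_{k/n} for k = 0..n-1 of a Brownian bridge X_t = W_t - t W_1 (X_1 = X_0 is dropped)."""
    rng = random.Random(seed)
    w = [0.0]
    for _ in range(n):
        w.append(w[-1] + rng.gauss(0.0, (1.0 / n) ** 0.5))
    return [w[k] - (k / n) * w[n] for k in range(n)]


def positive_shifts(x: List[float]) -> List[int]:
    """Grid shifts u for which Y_{k/n} = X_{{(u+k)/n}} - X_{u/n} > 0 for all k = 1..n-1."""
    n = len(x)
    return [u for u in range(n)
            if all(x[(u + k) % n] - x[u] > 0 for k in range(1, n))]


x = bridge_grid(200, seed=5)
tau_n = min(range(len(x)), key=x.__getitem__)  # grid index of the minimum
print(positive_shifts(x), tau_n)               # a single shift, equal to tau_n
```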

The idea is that, by letting n go to infinity, the law of X conditioned on $X_{k/n}>0$ over 0 < k < n tends to a Brownian excursion and that $\tau_n$ tends to $\tau^*$. Taking limits will give the result. First, though, we need to show that continuous-time Brownian bridges have a unique minimum.

Lemma 11 If X is a Brownian bridge then, with probability one, there is a unique time $0\le\tau^*<1$ at which $X_{\tau^*}=\min_tX_t$.

Proof: We can reduce to the case where X is standard Brownian motion. Letting $\sigma=\sup\{t\le1\colon X_t=0\}$, lemma 15 of the post on Brownian bridges says that $B_t=\sigma^{-1/2}X_{t\sigma}$ is a Brownian bridge independent of $1_{[\sigma,1]}X$. If B did not have a unique minimum, then the same would be true of X whenever $X_1>0$, which has probability 1/2. So, it is sufficient to show that Brownian motion X has a unique minimum over [0, 1].

If X did not have a unique minimum, then for some rational time 0 < t < 1 we would have $\min_{s\le t}X_s=\min_{t\le s\le1}X_s$ with positive probability. That is, the independent Brownian motions $s\mapsto X_t-X_{t-s}$ and $s\mapsto X_t-X_{t+s}$ (with time index s ≥ 0, over the ranges s ≤ t and s ≤ 1 − t respectively) would have the same maximum. However, the maxima are independent with a continuous distribution, so are almost surely distinct. ⬜

Theorem 12 (Vervaat) Let $\{X_t\}_{t\in[0,1]}$ be a Brownian bridge and $\tau^*\in[0,1)$ be the time at which its minimum is achieved. Then, $\tau^*$ is uniformly distributed and, independently,
$$B_t=X_{\{\tau^*+t\}}-X_{\tau^*}$$
is a Brownian excursion over 0 ≤ t ≤ 1.

Proof: Use the notation of lemma 10. As X has a unique minimum and is continuous, $\tau_n\to\tau^*$ (modulo 1) as n goes to infinity. So, the processes $B^n_t\equiv X_{\{\tau_n+t\}}-X_{\tau_n}$ converge uniformly to $B_t$.

On the other hand, the law of $B^n$ is the same as that of X conditioned on $S_n$. Fixing a time 0 < T < 1, by theorem 6 we can write
$$X_t=U(\tau-\sigma)^{1/2}Y_{(t-\sigma)/(\tau-\sigma)}$$
over σ ≤ t ≤ τ, for random times σ < T < τ, a Rademacher random variable U and, independently, a Brownian excursion Y. As the event $S_n$ only depends on the signs of X, which are determined by σ, τ, U and the path of X outside of [σ, τ], it is independent of Y. Therefore, conditioned on $S_n$, Y is still a Brownian excursion.

Next, for any 0 < a < T < b < 1 then, as $B^n$ tends uniformly to B, which is strictly positive on [a, b], for large enough n it will be strictly positive on the interval [a, b]. So,
$$\mathbb P(\sigma<a,\ \tau>b,\ U=1\mid S_n)=\mathbb P\Big(\inf_{t\in[a,b]}X_t>0\,\Big\vert\,S_n\Big)=\mathbb P\Big(\inf_{t\in[a,b]}B^n_t>0\Big)\to1$$
as n → ∞. So, conditioned on $S_n$, σ → 0, τ → 1 and U → 1 in law. Hence, conditioned on $S_n$, X tends in law to the Brownian excursion Y. As it also tends in law to B, this shows that B is a Brownian excursion.

It only remains to show that $\tau^*$ is uniformly distributed independently of B. To this end, introduce a random variable υ uniformly distributed on [0, 1) independently of X, and set $\tilde X_t=X_{\{\upsilon+t\}}-X_\upsilon$, which is a Brownian bridge. Its minimum occurs at time $\tilde\tau^*=\{\tau^*-\upsilon\}$ and $\tilde X_{\{\tilde\tau^*+t\}}-\tilde X_{\tilde\tau^*}=B_t$. As υ is uniform and independent of X, $\tilde\tau^*$ is uniformly distributed independently of X and, hence, of B. However, as $\tilde X$ has the same distribution as X, the pair $(B,\tilde\tau^*)$ has the same distribution as $(B,\tau^*)$. ⬜
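The uniformity of $\tau^*$ is easy to observe numerically. In the Python sketch below (the helper names, grid size and sample size are illustrative assumptions), the grid minimizer of a discretized bridge is recorded over many samples. By the same cyclic-shift argument as in lemma 10, the grid minimizer is exactly uniform on {0, 1/n, …, (n − 1)/n}, so its sample mean should be close to (n − 1)/(2n), and about a quarter of the samples should fall in [0, 1/4).

```python
import random
from typing import Tuple


def argmin_time(n: int, rng: random.Random) -> float:
    """Grid time k/n at which a discretized Brownian bridge X_t = W_t - t W_1 is smallest."""
    w = [0.0]
    for _ in range(n):
        w.append(w[-1] + rng.gauss(0.0, (1.0 / n) ** 0.5))
    x = [w[k] - (k / n) * w[n] for k in range(n)]
    return min(range(n), key=x.__getitem__) / n


def argmin_summary(n: int = 64, trials: int = 10_000, seed: int = 7) -> Tuple[float, float]:
    """Sample mean of the minimizer and the fraction of minimizers in [0, 1/4)."""
    rng = random.Random(seed)
    times = [argmin_time(n, rng) for _ in range(trials)]
    return sum(times) / trials, sum(t < 0.25 for t in times) / trials


mean, lower_quarter = argmin_summary()
print(mean, lower_quarter)  # close to 63/128 and 1/4 respectively
```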

We can also go in the opposite direction and construct Brownian bridges from the sample paths of excursions.

Theorem 13 Let $\{B_t\}_{t\in[0,1]}$ be a Brownian excursion and, independently, υ be uniformly distributed on [0, 1). Then, $X_t=B_{\{\upsilon+t\}}-B_\upsilon$ is a standard Brownian bridge.

Proof: Start by choosing X to be a Brownian bridge. By theorem 12 it has a unique minimum at time $\tau^*\in[0,1)$, which is uniformly distributed and independent of the excursion $B_t=X_{\{\tau^*+t\}}-X_{\tau^*}$.

Rearranging, $X_t=B_{\{\upsilon+t\}}-B_\upsilon$ is a standard Brownian bridge, where $\upsilon=1-\tau^*$ is uniformly distributed on (0, 1) independently of B. ⬜
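On a grid, this rearrangement can be checked path by path: rotating a discretized bridge to its minimum index m, and then rotating the result by the complementary index n − m (the grid analogue of υ = 1 − τ*), recovers the original values up to rounding. The sketch below is illustrative Python with hypothetical helper names.

```python
import random
from typing import List


def bridge_grid(n: int, seed: int = 0) -> List[float]:
    """Return X_{k/n} for k = 0..n-1 of a Brownian bridge X_t = W_t - t W_1."""
    rng = random.Random(seed)
    w = [0.0]
    for _ in range(n):
        w.append(w[-1] + rng.gauss(0.0, (1.0 / n) ** 0.5))
    return [w[k] - (k / n) * w[n] for k in range(n)]


def rotate(path: List[float], shift: int) -> List[float]:
    """Periodic shift Z_{k/n} = P_{{(shift + k)/n}} - P_{shift/n} on a grid of len(path) points."""
    n = len(path)
    return [path[(shift + k) % n] - path[shift] for k in range(n)]


x = bridge_grid(500, seed=11)
m = min(range(len(x)), key=x.__getitem__)             # grid analogue of tau*
excursion = rotate(x, m)                              # Vervaat transform (theorem 12)
recovered = rotate(excursion, (len(x) - m) % len(x))  # shift by upsilon = 1 - tau* (theorem 13)
print(max(abs(a - b) for a, b in zip(recovered, x)))  # rounding-level difference
```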

The excursion distribution

We can show that Brownian excursions are Markov processes and compute their transition probabilities. It turns out that these are described by noncentral chi-squared distributions. Recall that $\chi^2_n(\mu)$ is the distribution of the sum of squares of independent normally distributed random variables,
$$Z=Z_1^2+Z_2^2+\cdots+Z_n^2,$$
where $Z_i$ have unit variances and means $\mu_i$ satisfying $\sum_i\mu_i^2=\mu$. Alternatively, the distribution of a $\chi^2_n(\mu)$ random variable Z is described by the moment generating function,
$$\mathbb E\big[e^{-\lambda Z}\big]=(1+2\lambda)^{-n/2}\exp\Big(-\frac{\lambda\mu}{1+2\lambda}\Big),$$
for λ ≥ 0. This also serves as a valid definition for noninteger n, although that will not be required here. In fact, we only require chi-squared distributions for order n = 3. Start by looking at the distribution of a Brownian motion conditioned on when it hits zero.
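Before moving on, the transform $\mathbb E[e^{-\lambda Z}]=(1+2\lambda)^{-n/2}\exp(-\lambda\mu/(1+2\lambda))$ can be sanity-checked against the sum-of-squares description. The Python sketch below (function names and sample size are illustrative) puts all of the noncentrality into the first term, so that $Z_1$ has mean $\sqrt\mu$ and the other $Z_i$ are centered, and compares a Monte Carlo average of $e^{-\lambda Z}$ with the closed form.

```python
import math
import random


def chi2_laplace(lam: float, n: int, mu: float) -> float:
    """Closed form of E[exp(-lam Z)] for Z ~ chi^2_n(mu), valid for lam >= 0."""
    return (1.0 + 2.0 * lam) ** (-n / 2) * math.exp(-lam * mu / (1.0 + 2.0 * lam))


def sample_chi2(n: int, mu: float, rng: random.Random) -> float:
    """One draw of Z = Z_1^2 + ... + Z_n^2 with means (sqrt(mu), 0, ..., 0) and unit variances."""
    z1 = rng.gauss(math.sqrt(mu), 1.0)
    return z1 * z1 + sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(n - 1))


def mc_laplace(lam: float, n: int, mu: float, samples: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of E[exp(-lam Z)]."""
    rng = random.Random(seed)
    return sum(math.exp(-lam * sample_chi2(n, mu, rng)) for _ in range(samples)) / samples


estimate = mc_laplace(0.5, 3, 2.0)
print(chi2_laplace(0.5, 3, 2.0), estimate)
```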

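Lemma 14 Let X be Brownian motion with initial value $X_0>0$ and
$$\tau=\inf\{t\ge0\colon X_t=0\}$$
be the first time that it hits 0. Then, for any time t > 0, conditioned on the values of $X_0$ and τ, on the event τ > t,
$$\frac{\tau X_t^2}{t(\tau-t)}$$
has the $\chi^2_3(\mu)$ distribution where
$$\mu=\frac{(\tau-t)X_0^2}{t\tau}.$$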

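Proof: Throughout, I will condition on the value of $X_0>0$, so this can be taken to be constant. Fixing a time T > t, we start by computing the distribution of $X_t$ on the event τ > T using the expression
$$\mathbb E\big[f(X_t)1_{\{\tau>T\}}\big]=\mathbb E\big[f(X_t)\operatorname{sgn}(X_T)\big],$$
valid for bounded measurable even functions f. This is the reflection principle. By symmetry in reflecting X about 0 after time τ, which leaves $f(X_t)$ unchanged but flips the sign of $X_T$, the expectation on the right hand side restricted to τ ≤ T is zero and, restricted to τ > T, it is equal to the left hand side.

As $X_T-X_t$ is a centered normal with variance T − t independently of $X_t$, we obtain
$$\mathbb E\big[f(X_t)1_{\{\tau>T\}}\big]=\mathbb E\Big[f(X_t)\Big(2\Phi\Big(\frac{X_t}{\sqrt{T-t}}\Big)-1\Big)\Big],$$
where Φ is the cumulative normal distribution function $\Phi(x)=\mathbb P(N<x)$ for standard normal N. As this has derivative $e^{-x^2/2}/\sqrt{2\pi}$, the expression above is continuously differentiable in T, so regular continuous conditional probabilities exist with respect to τ. Taking the derivative,
$$\mathbb E\big[f(X_t)\,\big\vert\,\tau=T\big]\sim-\frac{\partial}{\partial T}\mathbb E\big[f(X_t)1_{\{\tau>T\}}\big]\sim\mathbb E\Big[f(X_t)X_te^{-X_t^2/(2(T-t))}\Big].$$
I am using ∼ to mean that the two sides are equal up to a nonzero scaling factor depending only on t, T, $X_0$. This avoids a bit of mess, since we do not need to keep track of these scaling factors which, in any case, are uniquely determined by the fact that the total probability must sum to one.

We compute the moment generating function of $Y=\tau X_t^2/(t(\tau-t))$ by setting $f(x)=e^{-\lambda Tx^2/(t(T-t))}$,
$$\mathbb E\big[e^{-\lambda Y}\,\big\vert\,\tau=T\big]\sim\mathbb E\Big[X_t\exp\Big(-\frac{\lambda TX_t^2}{t(T-t)}-\frac{X_t^2}{2(T-t)}\Big)\Big]=\mathbb E\big[X_te^{-aX_t^2/2}\big],$$
where $a=2\lambda T/(t(T-t))+1/(T-t)$. We are effectively done now. We know that $X_t$ is normal with mean $X_0$ and variance t, so have everything necessary to compute the expectation on the right hand side. We could just plug in the probability density of $X_t$ and perform the integral. To avoid some of the messy details, we can use some of the ideas from the post on the normal distribution. By equation (12) from that post,
$$\mathbb E\big[e^{-aX_t^2/2}\big]=(1+at)^{-1/2}\exp\Big(-\frac{aX_0^2}{2(1+at)}\Big).$$
As $X_t$ has mean $X_0$, we can differentiate with respect to this, so that $X_t$ has derivative equal to 1, giving
$$\mathbb E\big[e^{-\lambda Y}\,\big\vert\,\tau=T\big]\sim\mathbb E\big[X_te^{-aX_t^2/2}\big]=\frac{X_0}{(1+at)^{3/2}}\exp\Big(-\frac{aX_0^2}{2(1+at)}\Big)\sim(1+2\lambda)^{-3/2}\exp\Big(-\frac{\lambda\mu}{1+2\lambda}\Big),$$
with $\mu=(T-t)X_0^2/(tT)$, where I used $1+at=T(1+2\lambda)/(T-t)\sim1+2\lambda$ together with
$$\frac{a}{1+at}=\frac1t-\frac{T-t}{tT(1+2\lambda)},\qquad\frac{\mu}{2(1+2\lambda)}=\frac\mu2-\frac{\lambda\mu}{1+2\lambda}.$$
As this is the moment generating function of the $\chi^2_3(\mu)$ distribution, the proof is complete. ⬜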
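Since the ∼ steps hide normalizing constants, it may help to confirm numerically that $(1+at)^{-3/2}\exp(-aX_0^2/(2(1+at)))$, with $a=2\lambda T/(t(T-t))+1/(T-t)$ as above and divided by its value at λ = 0 (where the transform must equal 1), is exactly the $\chi^2_3(\mu)$ transform with $\mu=(T-t)X_0^2/(tT)$. This is a purely algebraic check in Python; the parameter values are arbitrary illustrative choices.

```python
import math


def unnormalized(lam: float, t: float, T: float, x0: float) -> float:
    """(1 + a t)^{-3/2} exp(-a x0^2 / (2 (1 + a t))) with a = 2 lam T / (t (T - t)) + 1 / (T - t)."""
    a = 2.0 * lam * T / (t * (T - t)) + 1.0 / (T - t)
    return (1.0 + a * t) ** -1.5 * math.exp(-a * x0 ** 2 / (2.0 * (1.0 + a * t)))


def chi2_3_laplace(lam: float, mu: float) -> float:
    """E[exp(-lam Z)] for Z ~ chi^2_3(mu)."""
    return (1.0 + 2.0 * lam) ** -1.5 * math.exp(-lam * mu / (1.0 + 2.0 * lam))


def normalized(lam: float, t: float, T: float, x0: float) -> float:
    """Divide out the lam-independent constant by normalizing at lam = 0."""
    return unnormalized(lam, t, T, x0) / unnormalized(0.0, t, T, x0)


t, T, x0 = 0.3, 1.7, 0.8
mu = (T - t) * x0 ** 2 / (t * T)
for lam in (0.1, 0.5, 2.0):
    print(lam, normalized(lam, t, T, x0), chi2_3_laplace(lam, mu))
```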

Applying the result above to Brownian excursions gives the transition probabilities.

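Theorem 15 A continuous nonnegative process $\{B_t\}_{t\in[0,1]}$ with natural filtration $\mathcal F_\cdot$ is a Brownian excursion if and only if $B_0=0$ and, for times 0 ≤ s < t < 1, conditioned on $\mathcal F_s$,
$$\frac{(1-s)B_t^2}{(t-s)(1-t)}$$
has the $\chi^2_3(\mu)$ distribution where
$$\mu=\frac{(1-t)B_s^2}{(t-s)(1-s)}.$$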

Proof: We just need to prove that an excursion B has these conditional distributions since, in the opposite direction, Markov processes are uniquely determined by their initial condition and transition function.

It is sufficient to consider times 0 < s < t < 1, since the case with s = 0 follows by taking the limit as s tends to 0. Using theorem 4, we can consider a Brownian excursion given by (2),
$$B_t=(\tau-\sigma)^{-1/2}\big|X_{\sigma+t(\tau-\sigma)}\big|,$$
for standard Brownian motion X, with σ being the final time before 1 at which $X_\sigma=0$ and τ being the first time after 1 for which $X_\tau=0$. We condition on σ = 1 − s and τ = 1 + σ, which are independent of B, and on the values of $X_u$ over u ≤ 1. Then, $\tilde X_u=|X_{1+u}|$ is a Brownian motion conditioned on hitting 0 at time $\tilde\tau=1-s$, and $B_t=\tilde X_{\tilde t}$ where $\tilde t=t-s$. In particular, $B_s=\tilde X_0$ and, as $\tilde\tau-\tilde t=1-t$,
$$\frac{(1-s)B_t^2}{(t-s)(1-t)}=\frac{\tilde\tau\tilde X_{\tilde t}^2}{\tilde t(\tilde\tau-\tilde t)},$$
which, by lemma 14, has the $\chi^2_3(\mu)$ distribution with
$$\mu=\frac{(\tilde\tau-\tilde t)\tilde X_0^2}{\tilde t\tilde\tau}=\frac{(1-t)B_s^2}{(t-s)(1-s)}.$$
⬜
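The transition law of theorem 15 gives a direct way to sample an excursion at finitely many times, one step at a time: given $B_s$, draw a $\chi^2_3(\mu)$ variable with $\mu=(1-t)B_s^2/((t-s)(1-s))$ and solve $(1-s)B_t^2/((t-s)(1-t))=\chi^2_3(\mu)$ for $B_t$. The Python sketch below is illustrative (grid, names and sample sizes are assumptions). As a check, the case s = 0 says that $B_{1/2}^2/(1/4)$ is $\chi^2_3(0)$-distributed, so $\mathbb E[B_{1/2}]=\tfrac12\mathbb E[\chi_3]=\sqrt{2/\pi}$, and this should hold even when $B_{1/2}$ is reached in two steps via $B_{1/4}$.

```python
import math
import random
from typing import List


def sample_excursion_markov(n: int, rng: random.Random) -> List[float]:
    """Sample B_{k/n}, k = 0..n, using the chi-squared transitions of theorem 15."""
    b = [0.0]                                            # B_0 = 0
    for k in range(1, n):
        s, t = (k - 1) / n, k / n
        mu = (1 - t) * b[-1] ** 2 / ((t - s) * (1 - s))
        # chi^2_3(mu) as a sum of three squared unit-variance normals with means (sqrt(mu), 0, 0)
        z1 = rng.gauss(math.sqrt(mu), 1.0)
        chi2 = z1 * z1 + rng.gauss(0.0, 1.0) ** 2 + rng.gauss(0.0, 1.0) ** 2
        # invert (1 - s) B_t^2 / ((t - s)(1 - t)) = chi2
        b.append(math.sqrt(chi2 * (t - s) * (1 - t) / (1 - s)))
    b.append(0.0)                                        # B_1 = 0
    return b


def mean_at_half(n: int = 4, trials: int = 40_000, seed: int = 2) -> float:
    """Monte Carlo estimate of E[B_{1/2}] (n must be even)."""
    rng = random.Random(seed)
    return sum(sample_excursion_markov(n, rng)[n // 2] for _ in range(trials)) / trials


estimate = mean_at_half()
print(estimate, math.sqrt(2 / math.pi))
```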

There is already a standard class of Markov processes described by noncentral chi-squared distributions, namely, Bessel processes. A nonnegative continuous Markov process X is a $\mathrm{BES}^2_n$ process if, for all times s < t, conditioned on $X_s$, $X_t/(t-s)$ has the $\chi^2_n(X_s/(t-s))$ distribution. This is referred to as a squared Bessel process. A (non-squared) Bessel process is the square root of a $\mathrm{BES}^2_n$ process, which I denote by $\mathrm{BES}_n$. Then, squared and non-squared Bessel processes with initial value a will be denoted by $\mathrm{BES}^2_n(a)$ and $\mathrm{BES}_n(a)$ respectively. A word of warning on the notation: where I use $\mathrm{BES}_n(a)$, some authors use a notation with the roles of n and a swapped. There does not seem to be a universally agreed standard here so, when comparing results in these notes with other sources, the meaning of the notation should be checked.

Brownian excursion sample paths can be constructed as a simple transform of the paths of a Bessel process of order 3.

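Theorem 16 A continuous process $\{B_t\}_{t\in[0,1]}$ is a Brownian excursion if and only if $(1+t)B_{t/(1+t)}$ is a $\mathrm{BES}_3(0)$ process. Equivalently, $B_t=(1-t)X_{t/(1-t)}$ for a $\mathrm{BES}_3(0)$ process X.

Proof: The two conditions are clearly equivalent, since $(1+t)B_{t/(1+t)}=X_t$ if and only if $B_t=(1-t)X_{t/(1-t)}$. Supposing that X is a $\mathrm{BES}_3(0)$ process, it needs to be shown that B is a Brownian excursion.

Let $\mathcal F_\cdot$ and $\mathcal G_\cdot$ be the natural filtrations of B and X respectively, so that $\mathcal F_t=\mathcal G_{t/(1-t)}$.

For times 0 ≤ s < t < 1, set $s'=s/(1-s)$ and $t'=t/(1-t)$. By definition, conditional on $\mathcal G_{s'}$, $X^2_{t'}/(t'-s')$ has the $\chi^2_3(\mu)$ distribution with $\mu=X^2_{s'}/(t'-s')$. Using $t'-s'=(t-s)/((1-s)(1-t))$, $X_{t'}=B_t/(1-t)$ and $X_{s'}=B_s/(1-s)$, a little rearrangement gives
$$\frac{X_{t'}^2}{t'-s'}=\frac{(1-s)B_t^2}{(t-s)(1-t)},\qquad\mu=\frac{X_{s'}^2}{t'-s'}=\frac{(1-t)B_s^2}{(t-s)(1-s)}.$$
As $\mathcal F_s=\mathcal G_{s'}$, theorem 15 shows that B is a Brownian excursion. ⬜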
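Theorem 16 also suggests a simulation recipe: sample a $\mathrm{BES}_3(0)$ path at the times t/(1 − t), then multiply by 1 − t. The Python sketch below relies on the standard fact that the Euclidean norm of a 3-dimensional Brownian motion started at the origin is a $\mathrm{BES}_3(0)$ process; the helper names and sample sizes are illustrative assumptions. Since $B_t^2/(t(1-t))$ is $\chi^2_3(0)$-distributed, the sample means at t = 1/4 and t = 3/4 should both be near $\sqrt{3/16}\cdot2\sqrt{2/\pi}$, and the mean at t = 1/2 near $\sqrt{2/\pi}$.

```python
import math
import random
from typing import List


def excursion_from_bes3(times: List[float], rng: random.Random) -> List[float]:
    """Return B_t = (1 - t) * Y_{t/(1-t)} at the given increasing times in [0, 1).

    Y is a BES_3(0) process, sampled as the Euclidean norm of a 3-dimensional
    Brownian motion W started at the origin.
    """
    w = [0.0, 0.0, 0.0]
    u_prev = 0.0
    out = []
    for t in times:
        u = t / (1.0 - t)                          # Bessel time corresponding to t
        step = math.sqrt(u - u_prev)               # standard deviation of each coordinate increment
        w = [wi + rng.gauss(0.0, step) for wi in w]
        u_prev = u
        out.append((1.0 - t) * math.sqrt(sum(wi * wi for wi in w)))
    return out


def sample_means(trials: int = 40_000, seed: int = 4) -> List[float]:
    """Monte Carlo means of B at t = 1/4, 1/2, 3/4."""
    rng = random.Random(seed)
    totals = [0.0, 0.0, 0.0]
    for _ in range(trials):
        for i, v in enumerate(excursion_from_bes3([0.25, 0.5, 0.75], rng)):
            totals[i] += v
    return [s / trials for s in totals]


means = sample_means()
print(means)
```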

Brownian excursions can be identified as Bessel bridges of order 3, which are Bessel processes started from zero and conditioned on hitting zero at time 1. Alternatively, Bessel bridges can be constructed as the vector norm
$$\lVert X_t\rVert=\Big(\sum_{i=1}^n(X^i_t)^2\Big)^{1/2}$$
of an n-dimensional Brownian bridge X. That is, $X=(X^1,X^2,\ldots,X^n)$ for independent Brownian bridges $X^1,\ldots,X^n$. To see this, consider an n-dimensional Brownian motion X, so its vector norm $B=\lVert X\rVert$ is a $\mathrm{BES}_n(0)$ process. Conditioning on $B_1$ being small is equivalent to conditioning on each individual $X^i_1$ being small so, in the limit, this expresses B conditioned on $B_1=0$ as the vector norm of an n-dimensional Brownian bridge.

Theorem 17 A Bessel bridge of order 3 is a Brownian excursion. That is, if $X=(X^1,X^2,X^3)$ is a 3-dimensional Brownian bridge, then $\lVert X\rVert$ is a Brownian excursion.

Proof: As $X^i$ are independent Brownian bridges, $t\mapsto(1+t)X^i_{t/(1+t)}$ are independent Brownian motions and, hence, $(1+t)\lVert X_{t/(1+t)}\rVert$ is a $\mathrm{BES}_3(0)$ process. Then theorem 16 says that $\lVert X\rVert$ is a Brownian excursion. ⬜
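Theorem 17 translates directly into code: take three independent discretized Brownian bridges and compute the Euclidean norm at each grid time. In the Python sketch below (grid size, sample size and names are illustrative assumptions), the sample means at t = 1/4 and t = 3/4 should agree, reflecting the time-reversal symmetry of lemma 8, and both should be close to $\sqrt{t(1-t)}\,\mathbb E[\chi_3]=\tfrac{\sqrt3}{4}\cdot2\sqrt{2/\pi}$.

```python
import math
import random
from typing import List, Tuple


def bessel_bridge_path(n: int, rng: random.Random) -> List[float]:
    """Return |X_{k/n}|, k = 0..n, for a 3-dimensional Brownian bridge X.

    Each coordinate is an independent bridge built as W_t - t W_1 on the grid.
    """
    coords = []
    for _ in range(3):
        w = [0.0]
        for _ in range(n):
            w.append(w[-1] + rng.gauss(0.0, math.sqrt(1.0 / n)))
        coords.append([w[k] - (k / n) * w[n] for k in range(n + 1)])
    return [math.sqrt(sum(c[k] ** 2 for c in coords)) for k in range(n + 1)]


def quarter_means(n: int = 8, trials: int = 20_000, seed: int = 9) -> Tuple[float, float]:
    """Monte Carlo means of |X| at t = 1/4 and t = 3/4 (n must be divisible by 4)."""
    rng = random.Random(seed)
    first = third = 0.0
    for _ in range(trials):
        path = bessel_bridge_path(n, rng)
        first += path[n // 4]
        third += path[3 * n // 4]
    return first / trials, third / trials


expected = math.sqrt(3.0) / 4.0 * 2.0 * math.sqrt(2.0 / math.pi)
means = quarter_means()
print(means, expected)
```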

The excursion SDE

The final method I use to represent Brownian excursions is via a stochastic differential equation (SDE). Recall the Brownian bridge SDE, which included the negative drift term $-B_t/(1-t)$ pushing it to zero at time 1. We expect the SDE for a Brownian excursion B to have a similar drift term but, as it is nonnegative, there should also be a positive drift term which goes to infinity as B goes to zero, to push it away. In fact, we show that it satisfies the SDE
$$dB_t=dW_t+\Big(\frac1{B_t}-\frac{B_t}{1-t}\Big)dt.\tag{3}$$
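The SDE $dB_t=dW_t+(1/B_t-B_t/(1-t))\,dt$ can also be explored numerically, although its drift is singular at B = 0. The Python sketch below sidesteps this by a choice not taken from the text: it applies Euler-Maruyama to $Z=B^2$, which by Ito's lemma satisfies $dZ_t=2\sqrt{Z_t}\,dW_t+(3-2Z_t/(1-t))\,dt$ and has no singular drift, reflects negative proposals with `abs`, and imposes $B_1=0$ at the end. It is a rough illustration whose time-step bias has not been analysed; as a loose check, the mean of $B_{1/2}$ should come out near $\sqrt{2/\pi}$, the value given by the s = 0 case of theorem 15.

```python
import math
import random
from typing import List


def euler_excursion(n: int, rng: random.Random) -> List[float]:
    """Euler-Maruyama sketch for Z = B^2 on the grid t_k = k/n, returning B = sqrt(Z).

    Uses dZ = 2 sqrt(Z) dW + (3 - 2 Z / (1 - t)) dt, obtained from (3) by Ito's lemma.
    """
    dt = 1.0 / n
    z = 0.0                                      # Z_0 = B_0^2 = 0
    path = [0.0]
    for k in range(n - 1):
        t = k * dt
        z = abs(z + (3.0 - 2.0 * z / (1.0 - t)) * dt
                + 2.0 * math.sqrt(z * dt) * rng.gauss(0.0, 1.0))
        path.append(math.sqrt(z))                # B_{(k+1)/n}
    path.append(0.0)                             # B_1 = 0
    return path


def euler_mean_at_half(n: int = 200, trials: int = 3_000, seed: int = 6) -> float:
    """Monte Carlo mean of the Euler approximation to B_{1/2} (n must be even)."""
    rng = random.Random(seed)
    return sum(euler_excursion(n, rng)[n // 2] for _ in range(trials)) / trials


estimate = euler_mean_at_half()
print(estimate, math.sqrt(2 / math.pi))  # should be roughly equal
```

Discretizing the squared equation rather than (3) itself is a common way to keep the scheme stable near zero; the excursion is recovered by taking square roots.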

Theorem 18 A nonnegative continuous process $\{B_t\}_{t\in[0,1]}$ is a Brownian excursion if and only if $B_0=0$ (almost surely) and it solves the SDE (3) over 0 < t < 1 for a standard Brownian motion W.

Proof: We are spoilt for choice on how to prove this. We could use the transition probabilities directly (as was done for Brownian bridges), or use the identification with Bessel bridges. Instead, I will use the representation in terms of Bessel processes. Recall the SDE for a $\mathrm{BES}^2_n$ process Y,
$$dY_t=2\sqrt{Y_t}\,dW_t+n\,dt,$$
for Brownian motion W. Then, for a non-squared Bessel process X we write $Y=X^2$ and, by an application of Ito’s lemma with n = 3,
$$dX_t=dW_t+X_t^{-1}dt.\tag{4}$$
Consider the time-changed process $B_t=(1-t)X_{t/(1-t)}$. Note that $W_{t/(1-t)}$ is centered Gaussian with independent increments and variance $t/(1-t)$. As this has derivative $(1-t)^{-2}$, we can write $dW_{t/(1-t)}=(1-t)^{-1}d\tilde W_t$ for a standard Brownian motion $\tilde W$. Then, SDE (4) is equivalent to B satisfying
$$dB_t=d\tilde W_t+\Big(\frac1{B_t}-\frac{B_t}{1-t}\Big)dt,$$
which is SDE (3) with respect to a different Brownian motion. Hence, B satisfies (3) if and only if X satisfies (4) for some other Brownian motion or, equivalently, X is a $\mathrm{BES}_3$ process. So, the result follows from theorem 16. ⬜
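For readers who want the intermediate step, the product-rule computation linking the Bessel SDE $dX_u=dW_u+X_u^{-1}du$ to the excursion SDE can be sketched as follows, writing $u=t/(1-t)$, so that $X_u=B_t/(1-t)$, and using $dW_u=(1-t)^{-1}d\tilde W_t$ as in the proof:

$$
\begin{aligned}
u&=\frac{t}{1-t},\qquad\frac{du}{dt}=\frac{1}{(1-t)^2},\qquad X_u=\frac{B_t}{1-t},\\
dB_t&=d\big((1-t)X_u\big)=-X_u\,dt+(1-t)\,dX_u\\
&=-\frac{B_t}{1-t}\,dt+(1-t)\Big(dW_u+\frac{du}{X_u}\Big)\\
&=-\frac{B_t}{1-t}\,dt+(1-t)\cdot\frac{d\tilde W_t}{1-t}+(1-t)\cdot\frac{1-t}{B_t}\cdot\frac{dt}{(1-t)^2}\\
&=d\tilde W_t+\Big(\frac{1}{B_t}-\frac{B_t}{1-t}\Big)dt.
\end{aligned}
$$

No quadratic covariation term appears in the product rule, since the factor 1 − t has finite variation.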