One of the common themes throughout the theory of continuous-time stochastic processes is the importance of choosing good versions of processes. Specifying the finite distributions of a process is not sufficient to determine its sample paths so, if a continuous modification exists, then it makes sense to work with that. A relatively straightforward criterion ensuring the existence of a continuous version is provided by Kolmogorov’s continuity theorem.

For any positive real number $\gamma$, a map $f\colon E\to F$ between metric spaces E and F is said to be $\gamma$-Hölder continuous if there exists a positive constant C satisfying

$$d\big(f(x),f(y)\big)\le C\,d(x,y)^\gamma$$

for all $x,y\in E$. The smallest value of C satisfying this inequality is known as the $\gamma$-Hölder coefficient of $f$. Hölder continuous functions are always continuous and, at least on bounded spaces, Hölder continuity is a stronger property for larger values of the exponent $\gamma$. So, if E is a bounded metric space of diameter D and $0<\gamma'\le\gamma$, then every $\gamma$-Hölder continuous map from E is also $\gamma'$-Hölder continuous, since $d(x,y)^\gamma\le D^{\gamma-\gamma'}d(x,y)^{\gamma'}$. Also, 1-Hölder and Lipschitz continuity are equivalent.
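To make the definition concrete, here is a minimal Python sketch (not from the post) computing the smallest constant C that works over a finite set of sample points. The helper name `holder_coefficient` is hypothetical, and since it only sees finitely many pairs, it gives a lower bound for the true $\gamma$-Hölder coefficient of the underlying function.

```python
import itertools
import math


def holder_coefficient(points, values, gamma):
    """Smallest C with |f(x) - f(y)| <= C * |x - y|**gamma over all sampled pairs.

    points, values: equal-length sequences of reals, with values[i] = f(points[i]).
    Only finitely many pairs are checked, so this is a lower bound for the
    gamma-Hölder coefficient of f on the whole interval.
    """
    best = 0.0
    for (x, fx), (y, fy) in itertools.combinations(zip(points, values), 2):
        if x != y:
            best = max(best, abs(fx - fy) / abs(x - y) ** gamma)
    return best


if __name__ == "__main__":
    xs = [k / 100 for k in range(101)]
    roots = [math.sqrt(x) for x in xs]
    # sqrt is 1/2-Hölder on [0, 1] with coefficient 1 (attained by pairs (0, y)) ...
    print(holder_coefficient(xs, roots, 0.5))
    # ... but not Lipschitz: this ratio grows without bound as the grid near 0 is refined.
    print(holder_coefficient(xs, roots, 1.0))
```

With 101 grid points the first value is 1 up to rounding, and the second is about 10, from the pair $(0,0.01)$; refining the grid pushes it higher.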

Kolmogorov’s theorem gives simple conditions on the pairwise distributions of a process which guarantee the existence of a continuous modification but, also, states that the sample paths are almost surely locally Hölder continuous. That is, they are almost surely Hölder continuous on every bounded interval. To start with, we look at real-valued processes. Throughout this post, we work with respect to a probability space $(\Omega,\mathcal F,\mathbb P)$. There is no need to assume the existence of any filtration, since filtrations play no part in the results here.

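Theorem 1 (Kolmogorov) Let $\{X_t\}_{t\ge0}$ be a real-valued stochastic process such that there exist positive constants $\alpha,\beta,C$ satisfying

$$\mathbb E\left[|X_t-X_s|^\alpha\right]\le C|t-s|^{1+\beta}$$

for all $s,t\ge0$. Then, X has a continuous modification which, with probability one, is locally $\gamma$-Hölder continuous for all $0<\gamma<\beta/\alpha$.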

As an example, consider a standard Brownian motion X. In this case, $X_t-X_s$ is a centred normal variable of variance $|t-s|$. Hence,

$$\mathbb E\left[|X_t-X_s|^\alpha\right]=\mathbb E\left[|N|^\alpha\right]|t-s|^{\alpha/2}$$

for a standard normal N. Theorem 1 can be applied so long as we take $\alpha>2$. In that case, $\beta=\alpha/2-1$ and we see that Brownian motion is locally $\gamma$-Hölder continuous for all

$$\gamma<\frac\beta\alpha=\frac12-\frac1\alpha.$$

By choosing $\alpha$ as large as we like, this demonstrates that Brownian motion is locally $\gamma$-Hölder continuous for all $\gamma<1/2$. In the other direction, it is not hard to show that it cannot be 1/2-Hölder continuous on any nontrivial interval.
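The arithmetic in this example is easy to check numerically. The Python sketch below uses hypothetical helper names; it relies on the standard closed form $\mathbb E[|N|^\alpha]=2^{\alpha/2}\Gamma((\alpha+1)/2)/\sqrt\pi$ for the absolute moments of a standard normal, returns the exponent bound $\beta/\alpha=1/2-1/\alpha$ from theorem 1, and compares the moment formula against a Monte Carlo estimate.

```python
import math
import random


def abs_normal_moment(alpha):
    """E|N|^alpha for a standard normal N (closed form via the Gamma function)."""
    return 2 ** (alpha / 2) * math.gamma((alpha + 1) / 2) / math.sqrt(math.pi)


def bm_holder_bound(alpha):
    """Exponent bound beta/alpha from theorem 1 for Brownian motion.

    E|X_t - X_s|^alpha = E|N|^alpha * |t - s|^(alpha/2), so 1 + beta = alpha/2.
    """
    beta = alpha / 2 - 1
    if beta <= 0:
        raise ValueError("theorem 1 needs beta > 0, i.e. alpha > 2")
    return beta / alpha


def mc_increment_moment(alpha, dt, samples=200_000, seed=0):
    """Monte Carlo estimate of E|X_{s+dt} - X_s|^alpha, using X_{s+dt} - X_s ~ N(0, dt)."""
    rng = random.Random(seed)
    sd = math.sqrt(dt)
    return sum(abs(rng.gauss(0.0, sd)) ** alpha for _ in range(samples)) / samples


if __name__ == "__main__":
    # The bound 1/2 - 1/alpha increases towards 1/2 as alpha grows.
    for alpha in (3, 4, 10, 100):
        print(alpha, bm_holder_bound(alpha))
    alpha, dt = 4.0, 0.25
    print(mc_increment_moment(alpha, dt), abs_normal_moment(alpha) * dt ** (alpha / 2))
```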

More generally, theorem 1 can be applied to fractional Brownian motion. These are centred Gaussian processes whose finite distributions can be defined by the pairwise covariances. I do not show that these finite distributions are well-defined here (i.e., that the covariance matrix is positive semi-definite). The point is that once we have constructed the finite distributions, Kolmogorov’s theorem ensures the existence of a continuous modification.

Example 1 Fractional Brownian motion, $\{X_t\}_{t\ge0}$, of Hurst parameter H (strictly between 0 and 1), is a centred Gaussian process such that $X_t-X_s$ has standard deviation $|t-s|^H$ for all $s,t\ge0$.

This has a continuous modification which, with probability one, is locally $\gamma$-Hölder continuous for all $\gamma<H$.

As in the example of standard Brownian motion above, which is actually just fractional Brownian motion with Hurst parameter 1/2, we can compute

and, so, theorem 1 applies with and

-Hölder continuity holds for all

. Again, letting

go to infinity, shows that it holds for all

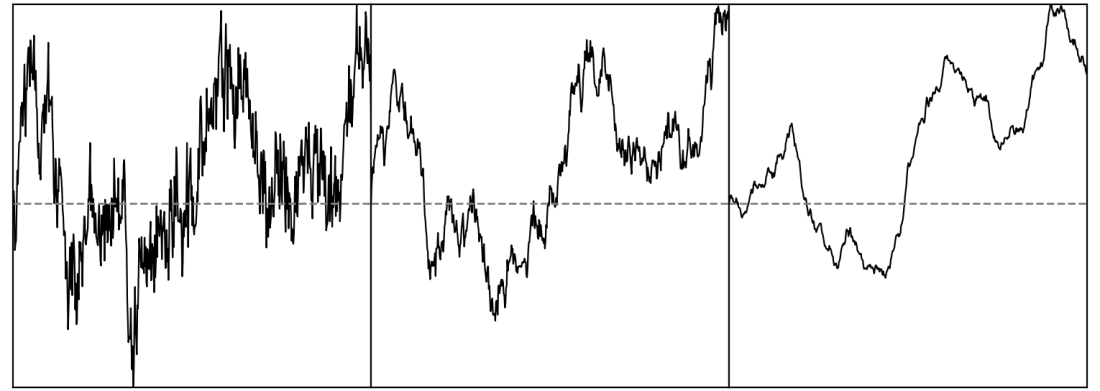

, as claimed. In the reverse direction, it is not difficult to show that the fractional Brownian motion is not H-Hölder continuous. So, with increasing value of H, the sample paths of fractional brownian motion become smoother, in a sense. This can be seen visually for the paths shown in figure 1 above.
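Paths like those in figure 1 can be generated with a short Python sketch. It assumes NumPy and is not the code used for the figure, which the post does not give. It also assumes the usual normalisation $X_0=0$, under which the stated increment variance forces the covariance $\mathbb E[X_sX_t]=\frac12\left(s^{2H}+t^{2H}-|t-s|^{2H}\right)$. Sampling uses a Cholesky factorisation, which is exact at the grid points but costs $O(n^3)$, fine for a few hundred points.

```python
import numpy as np


def fbm_covariance(times, hurst):
    """E[X_s X_t] = (s^{2H} + t^{2H} - |t - s|^{2H}) / 2, assuming X_0 = 0."""
    s = np.asarray(times, dtype=float)[:, None]
    t = np.asarray(times, dtype=float)[None, :]
    h2 = 2.0 * hurst
    return 0.5 * (s**h2 + t**h2 - np.abs(t - s) ** h2)


def sample_fbm(n_steps, hurst, rng=None):
    """One fractional Brownian motion path on the grid k/n_steps, k = 0..n_steps."""
    rng = np.random.default_rng() if rng is None else rng
    # Leave out t = 0: X_0 = 0 would make the covariance matrix singular.
    times = np.arange(1, n_steps + 1) / n_steps
    chol = np.linalg.cholesky(fbm_covariance(times, hurst))
    path = chol @ rng.standard_normal(n_steps)
    return np.concatenate(([0.0], times)), np.concatenate(([0.0], path))


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    for hurst in (0.25, 0.5, 0.75):
        t, x = sample_fbm(500, hurst, rng)
        # Rougher paths (small H) typically have much larger total variation on the grid.
        print(hurst, np.abs(np.diff(x)).sum())
```

For H = 1/2 the covariance reduces to $\min(s,t)$, recovering standard Brownian motion.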

The continuity theorem can be generalised in a couple of ways. Firstly, the process need not be real-valued but, rather, can take values in a complete metric space. Secondly, the (time) index need not be restricted to be the nonnegative reals, but can be allowed to take values in any subset of $\mathbb R^d$.

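Theorem 2 Let E be a separable and complete metric space, $S\subseteq\mathbb R^d$, and $\{X_x\}_{x\in S}$ be a collection of E-valued random variables. If $\alpha,\beta,C$ are positive constants satisfying

$$\mathbb E\left[d(X_x,X_y)^\alpha\right]\le C\Vert x-y\Vert^{d+\beta}\qquad(1)$$

for all $x,y\in S$, then $\{X_x\}_{x\in S}$ has a continuous modification. Furthermore, with probability one, this modification is $\gamma$-Hölder continuous on all bounded sets for all $\gamma<\beta/\alpha$.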

Theorem 1 is just the special case of this result where $E=\mathbb R$, $d=1$ and $S=\mathbb R_+=[0,\infty)$. The proof is given further down. The requirement for the metric space to be separable, so that it has a countable dense subset, is only really to ensure that $d(X_x,X_y)$ are measurable random variables. I was also a bit unclear in the statement of inequality (1) as to the meaning of the norm $\Vert\cdot\Vert$ on $\mathbb R^d$. We could, for example, use the $L^p$-norm for any $p\ge1$, defined by $\Vert x\Vert_p=\left(\sum_{i=1}^d|x_i|^p\right)^{1/p}$. Alternatively, the $L^\infty$ norm given by $\Vert x\Vert_\infty=\max_i|x_i|$ can be used. The fact that these are all equivalent,

$$\Vert x\Vert_\infty\le\Vert x\Vert_p\le d^{1/p}\Vert x\Vert_\infty,$$

means that it does not matter which is used. The only difference is in the value of the arbitrary constant C, which does not affect whether the condition of theorem 2 is satisfied.
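A quick numerical check of this norm comparison follows as a Python/NumPy sketch; the function name is hypothetical. It also indicates how the constant changes: if (1) holds for $\Vert\cdot\Vert_\infty$ with constant C then, as $\Vert\cdot\Vert_\infty\le\Vert\cdot\Vert_p$, it holds for $\Vert\cdot\Vert_p$ with the same C; conversely, a bound for $\Vert\cdot\Vert_p$ with constant C gives one for $\Vert\cdot\Vert_\infty$ with constant $d^{(d+\beta)/p}C$.

```python
import numpy as np


def norms_are_equivalent(x, p):
    """Check ||x||_inf <= ||x||_p <= d^(1/p) ||x||_inf for one vector x in R^d."""
    x = np.asarray(x, dtype=float)
    d = x.size
    sup_norm = np.linalg.norm(x, ord=np.inf)
    p_norm = np.linalg.norm(x, ord=p)
    tol = 1e-12 * max(sup_norm, 1.0)  # absorb floating-point rounding
    return sup_norm <= p_norm + tol and p_norm <= d ** (1.0 / p) * sup_norm + tol


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    for p in (1, 2, 3.5):
        ok = all(norms_are_equivalent(rng.normal(size=5), p) for _ in range(1000))
        print(p, ok)
```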

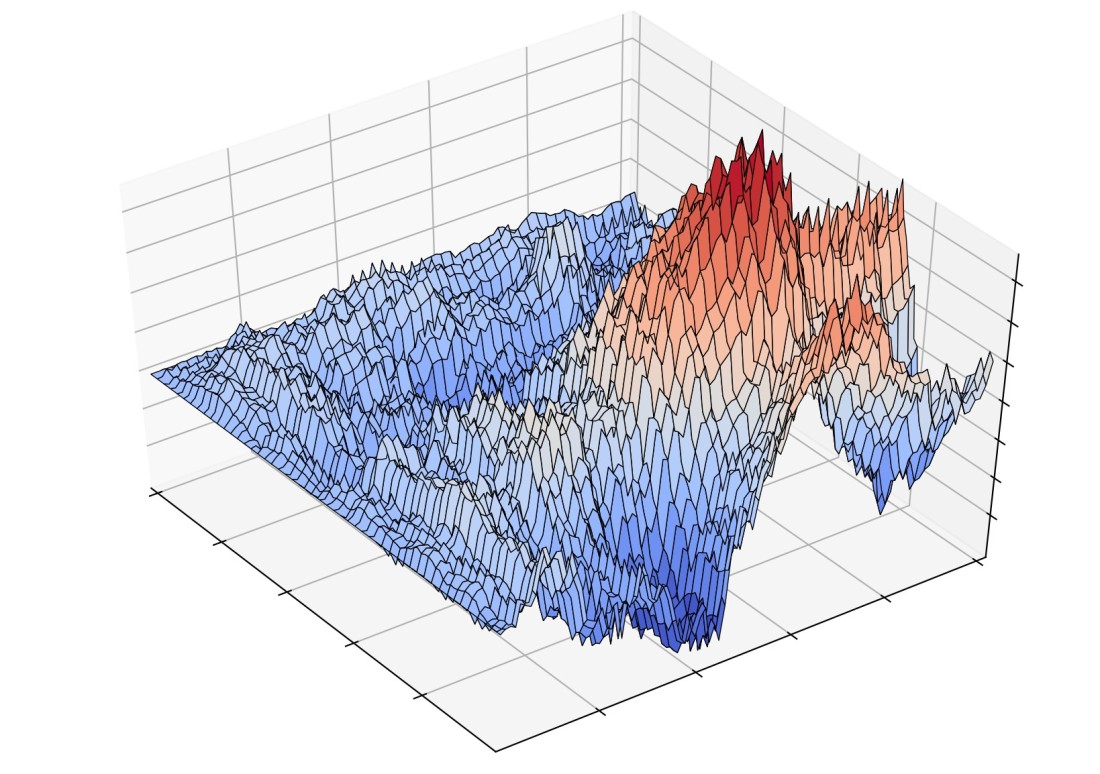

As an example application of theorem 2, we can construct generalisations of Brownian motion varying over a multidimensional index set. The 2-dimensional case is called a Brownian sheet, and a sample is plotted in figure 2 above. This can represent a continuous random path, which itself varies randomly over time. Such processes may be used to build models of interest rates where, at any moment in time, we have an entire yield curve representing the interest rates for all maturities, and these also vary randomly over time.

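Lemma 3 For each positive integer d, there exists a zero mean Gaussian stochastic process $\{W_t\}_{t\in\mathbb R_+^d}$ with covariance

$$\mathbb E[W_sW_t]=\prod_{i=1}^d\min(s_i,t_i)$$

for all $s,t\in\mathbb R_+^d$. This has a continuous modification, which is locally $\gamma$-Hölder continuous for all $\gamma<1/2$.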

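Proof: I make use of the standard result that, for any (real) inner product space V, we can define a joint normal collection of random variables $\xi_v$, over $v\in V$, with zero mean and such that $\mathbb E[\xi_u\xi_v]=\langle u,v\rangle$ for all $u,v\in V$. In fact, joint normal variables can be defined for any positive semidefinite covariance matrix, which applies here since,

$$\sum_{i,j=1}^nc_ic_j\langle v_i,v_j\rangle=\Big\Vert\sum_{i=1}^nc_iv_i\Big\Vert^2\ge0$$

for all $v_1,\ldots,v_n\in V$ and $c_1,\ldots,c_n\in\mathbb R$.

Take V to be $L^2(\mathbb R_+^d,\lambda)$ with $\lambda$ being the Lebesgue measure. Define,

$$W_t=\xi_{1_{[0,t]}}$$

for all $t\in\mathbb R_+^d$, with $[0,t]$ denoting the set of all $s\in\mathbb R_+^d$ with $s_i\le t_i$ ($i=1,\ldots,d$). We then have,

$$\mathbb E[W_sW_t]=\langle1_{[0,s]},1_{[0,t]}\rangle=\lambda\big([0,s]\cap[0,t]\big)=\prod_{i=1}^d\min(s_i,t_i)$$

as required.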
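On a regular grid, the construction $W_t=\xi_{1_{[0,t]}}$ reduces to summing independent Gaussians over grid cells. Each cell of side $h$ gets an independent $N(0,h^d)$ weight (the value of $\xi$ on its indicator function), and $W$ at a grid point is the sum of the weights over the cells inside $[0,t]$. Below is a minimal Python/NumPy sketch for d = 2. The function names are hypothetical, and this is just one simple way to sample, not necessarily how figure 2 was produced. At grid points it has exactly the covariance $\prod_i\min(s_i,t_i)$.

```python
import numpy as np


def sample_brownian_sheet(n, rng=None):
    """Brownian sheet on the grid {(i/n, j/n) : 0 <= i, j <= n} in [0, 1]^2.

    Each of the n*n grid cells receives an independent N(0, h^2) weight,
    h = 1/n, and W(i/n, j/n) is the sum of the weights over cells inside
    [0, i/n] x [0, j/n], so Cov(W_s, W_t) = min(s1, t1) * min(s2, t2).
    """
    rng = np.random.default_rng() if rng is None else rng
    h = 1.0 / n
    cells = h * rng.standard_normal((n, n))  # standard deviation sqrt(h * h) = h
    sheet = np.zeros((n + 1, n + 1))
    sheet[1:, 1:] = cells.cumsum(axis=0).cumsum(axis=1)
    return sheet


def sheet_covariance(s, t):
    """Target covariance prod_i min(s_i, t_i) from lemma 3."""
    return float(np.prod(np.minimum(s, t)))
```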

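It remains to show that W has a modification with the stated Hölder continuity and, for this, it is sufficient to prove the result for index t restricted to bounded sets of the form $[0,T]^d$, as the full result will follow by letting T increase to infinity. For $s,t\in[0,T]^d$, any point of the symmetric difference $[0,s]\,\triangle\,[0,t]$ has some coordinate $u_i$ lying between $s_i$ and $t_i$, so

$$\mathbb E\left[(W_t-W_s)^2\right]=\Vert1_{[0,t]}-1_{[0,s]}\Vert^2=\lambda\big([0,s]\,\triangle\,[0,t]\big)\le T^{d-1}\sum_{i=1}^d|t_i-s_i|=T^{d-1}\Vert t-s\Vert_1.$$

Hence, for any fixed $\alpha>0$, there exists a constant C satisfying

$$\mathbb E\left[|W_t-W_s|^\alpha\right]=\mathbb E\left[|N|^\alpha\right]\mathbb E\left[(W_t-W_s)^2\right]^{\alpha/2}\le C\Vert t-s\Vert_1^{\alpha/2}.$$

Theorem 2 can be applied so long as $\alpha>2d$. In this case, we take $\beta=\alpha/2-d$ and see that the continuous modification is $\gamma$-Hölder continuous for all $\gamma<\beta/\alpha=1/2-d/\alpha$. Letting $\alpha$ increase to infinity gives the result. ⬜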
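The measure of the symmetric difference can be computed exactly as $\lambda([0,s])+\lambda([0,t])-2\lambda([0,s]\cap[0,t])$. The following Python sketch (hypothetical helper name, NumPy assumed) compares it with the bound $T^{d-1}\Vert t-s\Vert_1$ at random points of $[0,T]^d$.

```python
import numpy as np


def box_symmetric_difference(s, t):
    """Lebesgue measure of the symmetric difference of [0, s] and [0, t] for s, t in R_+^d."""
    s = np.asarray(s, dtype=float)
    t = np.asarray(t, dtype=float)
    return np.prod(s) + np.prod(t) - 2.0 * np.prod(np.minimum(s, t))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d, T = 3, 2.0
    worst = 0.0
    for _ in range(10_000):
        s, t = rng.uniform(0.0, T, size=(2, d))
        bound = T ** (d - 1) * np.abs(t - s).sum()
        worst = max(worst, box_symmetric_difference(s, t) / bound)
    print(worst)  # ratio of the measure to the bound; should not exceed 1
```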

Proof of the Continuity Theorem

To show that a process is Hölder continuous, we need to bound the distances

$$d(X_x,X_y),\qquad x,y\in S.$$

In particular, they should be bounded by something of the form $K\Vert x-y\Vert^\gamma$ for small $\Vert x-y\Vert$. This depends on the joint distribution of $d(X_x,X_y)$ as x and y vary so, if all that we are given to work with is the inequality (1) for the individual distributions, then it is not easy to obtain a good bound. One, rather extreme, upper bound on a set of nonnegative real numbers is given by their sum. This does at least allow us to make use of the linearity of expectation,

$$\mathbb E\Big[\sup_{x,y\in S}d(X_x,X_y)^\alpha\Big]\le\sum_{x,y\in S}\mathbb E\left[d(X_x,X_y)^\alpha\right].$$

In cases of interest, the set S will be infinite, and the sum on the right hand side will contain infinitely many terms, so will diverge. As it is, this is not much help. However, if we restrict x and y to lie on a regular grid whose spacing is of order $\Vert x-y\Vert$, this idea does lead to useful bounds. Then, combining with the triangle inequality to split $d(X_x,X_y)$ as a finite sum of terms like $d(X_u,X_v)$ for pairs $u,v$ lying on such grids, we can obtain reasonable bounds for more general points x and y. Choosing grids of spacing $2^{-n}$ for integer n works well. This leads to considering the restriction of X to dyadic points $x\in\mathbb R^d$ where each $x_i$ is of the form $a2^{-n}$ for integer a. As I stated theorem 2 in a rather general form, where S can be any subset of $\mathbb R^d$ not necessarily including the dyadic points, this adds a slight complication. However, it is easily resolved by approximating the dyadic grid points by elements of S instead, and we obtain a proof of Hölder continuity on a dense set of points.

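Lemma 4 Let E be a separable complete metric space, S be a subset of the unit d-cube $[0,1]^d$, and $\{X_x\}_{x\in S}$ be a collection of E-valued random variables satisfying (1).

Then, there exists a countable dense subset $S'\subseteq S$ such that, with probability one, $x\mapsto X_x$ is $\gamma$-Hölder continuous on $S'$ for all $\gamma<\beta/\alpha$. More precisely, the $\gamma$-Hölder coefficient $K_\gamma$ of $X$ on $S'$ satisfies $\mathbb E\left[K_\gamma^\alpha\right]<\infty$.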

Proof: For each nonnegative integer n, we let $D_n$ denote the set of dyadic numbers of the form $a2^{-n}$ for integer $0\le a\le2^n$. Also, for $x\in D_n$, let $I_n(x)$ be the dyadic interval $[x,x+2^{-n})$.

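Moving from 1 dimension to d dimensions, we let $D^d_n$ be the collection of $x\in\mathbb R^d$ such that each $x_i$ is in $D_n$, and write

$$I^d_n(x)=I_n(x_1)\times I_n(x_2)\times\cdots\times I_n(x_d).$$

We note that, for each n, these sets form a partition of $[0,1+2^{-n})^d$, which contains $[0,1]^d$. Let $D^S_n$ denote the set of $x\in D^d_n$ such that $I^d_n(x)$ has nonempty intersection with S and, for any such x, choose $\theta_n(x)\in I^d_n(x)\cap S$. Then, define the finite subset of S,

$$S_n=\left\{\theta_n(x)\colon x\in D^S_n\right\}.$$

Note that, whatever the choice of $\theta_n(x)$, it will lie in $I^d_{n+1}(y)$ for some $y\in D^S_{n+1}$, and distinct $x\in D^S_n$ give distinct such y. Hence, choosing the $\theta_n$ inductively in n, $\theta_{n+1}(y)$ can be chosen to equal $\theta_n(x)$ and, by doing this, we ensure that $S_n\subseteq S_{n+1}$. We take $S'=\bigcup_nS_n$. This is easily seen to be dense in S. Consider $z\in S$, which will be contained in $I^d_n(x)$ for some $x\in D^d_n$. Then $x$ is in $D^S_n$ and $\Vert\theta_n(x)-z\Vert_\infty<2^{-n}$. As n can be as large as we like, this shows that $S'$ is dense.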
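The inductive choice of the representatives $\theta_n(x)$, which makes the sets $S_n$ increase, can be sketched in Python for a finite set S of points in $[0,1]^d$. This is only an illustration under that finiteness assumption, and the function names are hypothetical. The corner of the dyadic cube containing z is found coordinatewise as $\lfloor2^nz_i\rfloor2^{-n}$, and at each level the previous representatives are kept before any new ones are picked.

```python
import math


def dyadic_corner(z, n):
    """The x in D^d_n with z in I^d_n(x), i.e. coordinates floor(2^n z_i) / 2^n."""
    scale = 2**n
    return tuple(math.floor(zi * scale) / scale for zi in z)


def nested_representatives(points, levels):
    """theta[n] maps each x in D^S_n to a chosen point theta_n(x) of S in I^d_n(x).

    Representatives from level n - 1 are reused at level n, so that
    S_n = set(theta[n].values()) is contained in S_{n+1}.
    """
    theta = []
    for n in range(levels + 1):
        chosen = {}
        if n > 0:
            # Distinct level-(n-1) cubes are disjoint, so no two reused points collide.
            for z in theta[n - 1].values():
                chosen[dyadic_corner(z, n)] = z
        for z in points:
            # Any cube meeting S that is still empty gets an arbitrary point of S.
            chosen.setdefault(dyadic_corner(z, n), z)
        theta.append(chosen)
    return theta
```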

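Consider the random variables,

$$\begin{aligned}U_n&=\max\left\{d\big(X_{\theta_n(x)},X_{\theta_n(y)}\big)\colon x,y\in D^S_n,\ \Vert x-y\Vert_\infty\le2^{-n}\right\},\\V_n&=\max\left\{d\big(X_{\theta_n(x)},X_{\theta_{n+1}(y)}\big)\colon x\in D^S_n,\ y\in D^S_{n+1},\ I^d_{n+1}(y)\subseteq I^d_n(x)\right\}.\end{aligned}$$

We note that there are at most $(2^n+1)^d\le2^{(n+1)d}$ possible values of $x\in D^S_n$. Then, there are at most $3^d$ possible values for y in $D^S_n$ with $\Vert x-y\Vert_\infty\le2^{-n}$ and, then, $\Vert\theta_n(x)-\theta_n(y)\Vert_\infty<2^{1-n}$. Similarly, if $x\in D^S_n$ and $y\in D^S_{n+1}$ with $I^d_{n+1}(y)\subseteq I^d_n(x)$, then $y_i-x_i$ is equal to $2^{-n-1}$ or 0, for each $i=1,\ldots,d$. Hence, there are at most $2^d$ possible values for y and, then, $\Vert\theta_n(x)-\theta_{n+1}(y)\Vert_\infty<2^{-n}$. We suppose that inequality (1) holds using the $L^\infty$ norm so that, bounding each maximum by the corresponding sum,

$$\begin{aligned}\mathbb E\left[U_n^\alpha\right]&\le2^{(n+1)d}\,3^d\,C\,2^{(1-n)(d+\beta)}=3^d2^{2d+\beta}C\,2^{-n\beta},\\\mathbb E\left[V_n^\alpha\right]&\le2^{(n+1)d}\,2^d\,C\,2^{-n(d+\beta)}=4^dC\,2^{-n\beta}.\end{aligned}$$

Consequently, for $0<\gamma<\beta/\alpha$, if we set

$$U^*=\sup_{n\ge0}2^{n\gamma}U_n$$

and

$$V^*=\sup_{n\ge0}2^{n\gamma}V_n$$

then, again bounding the suprema by sums,

$$\mathbb E\left[U^{*\alpha}\right]+\mathbb E\left[V^{*\alpha}\right]\le\sum_{n=0}^\infty2^{n\alpha\gamma}\left(\mathbb E\left[U_n^\alpha\right]+\mathbb E\left[V_n^\alpha\right]\right)\le\left(3^d2^{2d+\beta}+4^d\right)C\sum_{n=0}^\infty2^{-n(\beta-\alpha\gamma)}.$$

As $\beta-\alpha\gamma>0$, this geometric series converges, so $U^*$ and $V^*$ have finite $\alpha$-th moments. For distinct $x,y\in S'$, choose integer $m\ge0$ with

$$2^{-m-1}<\Vert x-y\Vert_\infty\le2^{-m}.$$

Also, choose $N\ge m$ large enough that x and y are both in $S_N$. For each integer $m\le n\le N$ choose $x^n,y^n\in D^S_n$ such that $x\in I^d_n(x^n)$ and $y\in I^d_n(y^n)$. By construction, $\theta_N(x^N)=x$ and $I^d_{n+1}(x^{n+1})\subseteq I^d_n(x^n)$, and similarly for y. Furthermore, $\Vert x^m-y^m\Vert_\infty\le2^{-m}$. Hence, by the triangle inequality,

$$\begin{aligned}d(X_x,X_y)&\le d\big(X_{\theta_m(x^m)},X_{\theta_m(y^m)}\big)+\sum_{n=m}^{N-1}\Big(d\big(X_{\theta_n(x^n)},X_{\theta_{n+1}(x^{n+1})}\big)+d\big(X_{\theta_n(y^n)},X_{\theta_{n+1}(y^{n+1})}\big)\Big)\\&\le U_m+2\sum_{n=m}^\infty V_n\le2^{-m\gamma}U^*+2V^*\sum_{n=m}^\infty2^{-n\gamma}=2^{-m\gamma}\Big(U^*+\frac{2V^*}{1-2^{-\gamma}}\Big).\end{aligned}$$

Hence, as $2^{-m}<2\Vert x-y\Vert_\infty$, the $\gamma$-Hölder coefficient on $S'$ satisfies,

$$K_\gamma\le2^\gamma\Big(U^*+\frac{2V^*}{1-2^{-\gamma}}\Big).$$

Raising to the power of $\alpha$, and using $(a+b)^\alpha\le2^\alpha(a^\alpha+b^\alpha)$ for $a,b\ge0$, gives

$$K_\gamma^\alpha\le2^{\alpha(1+\gamma)}\Big(U^{*\alpha}+\frac{2^\alpha V^{*\alpha}}{(1-2^{-\gamma})^\alpha}\Big),$$

which has finite expectation, as required. ⬜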
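The chaining step can be illustrated in the simplest case where S is the full dyadic grid $D^d_N$. Then every cube meets S and one may take $\theta_n(x)=x$, and these choices are nested as required. The Python sketch below, with hypothetical names, builds the chains $x^m,\ldots,x^N$ and $y^m,\ldots,y^N$ for a pair of grid points and evaluates the triangle-inequality bound for an arbitrary real function f standing in for the sample path $x\mapsto X_x$ (so E is the real line here).

```python
import math


def dyadic_corner(z, n):
    """Corner x in D^d_n of the dyadic cube I^d_n(x) containing z."""
    scale = 2**n
    return tuple(math.floor(zi * scale) / scale for zi in z)


def sup_dist(x, y):
    """The L-infinity distance ||x - y||_inf."""
    return max(abs(a - b) for a, b in zip(x, y))


def dyadic_chain(x, y, N):
    """Level m with 2^-(m+1) < ||x - y||_inf <= 2^-m, and chains x^n, y^n for m <= n <= N.

    x and y must be distinct points of [0, 1]^d with coordinates a * 2^-N.
    """
    gap = sup_dist(x, y)
    m = 0
    while 2.0 ** -(m + 1) >= gap:
        m += 1
    xs = [dyadic_corner(x, n) for n in range(m, N + 1)]
    ys = [dyadic_corner(y, n) for n in range(m, N + 1)]
    return m, xs, ys


def chaining_bound(f, xs, ys):
    """|f(x^m) - f(y^m)| plus the increments along both chains (triangle inequality)."""
    total = abs(f(xs[0]) - f(ys[0]))
    for chain in (xs, ys):
        total += sum(abs(f(b) - f(a)) for a, b in zip(chain, chain[1:]))
    return total
```

In the proof, the first term is bounded by $U_m$ and each chain increment by the corresponding $V_n$.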

That is the hard part of the proof over with. Constructing the continuous modification over bounded index sets is straightforward.

Lemma 5 Let E be a separable and complete metric space, $S\subseteq\mathbb R^d$ be bounded, and $\{X_x\}_{x\in S}$ be a collection of E-valued random variables satisfying inequality (1). Then $\{X_x\}_{x\in S}$ has a continuous modification. Furthermore, with probability one, this modification is $\gamma$-Hölder continuous for all $\gamma<\beta/\alpha$, and the Hölder coefficient satisfies $\mathbb E\left[K_\gamma^\alpha\right]<\infty$.

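Proof: By scaling and translating, if necessary, we can suppose without loss of generality that S is contained in the unit d-cube $[0,1]^d$. Then, applying lemma 4, there exists a countable dense subset $S'\subseteq S$ on which, for all $\gamma<\beta/\alpha$, the $\gamma$-Hölder coefficient $K_\gamma$ of X satisfies $\mathbb E\left[K_\gamma^\alpha\right]<\infty$. We can let $A$ be the event on which $K_\gamma<\infty$ for all $\gamma<\beta/\alpha$ (it is enough to take $\gamma$ in a sequence increasing to $\beta/\alpha$, since $K_\gamma$ is increasing in $\gamma$ on the unit cube, so A is measurable with probability one) and, then, choosing any fixed $e\in E$, define the modification,

$$\tilde X_x=\begin{cases}\lim_{S'\ni y\to x}X_y,&\text{on }A,\\e,&\text{otherwise.}\end{cases}$$

By uniform continuity and the completeness of E, the limit over y exists and defines a $\gamma$-Hölder continuous map on S with coefficient $K_\gamma$. It only remains to show that this is indeed a modification. So, choosing $x\in S$ and a sequence $y_n\in S'$ tending to $x$, Fatou’s lemma gives,

$$\mathbb E\left[d(\tilde X_x,X_x)^\alpha\right]\le\liminf_{n\to\infty}\mathbb E\left[d(X_{y_n},X_x)^\alpha\right]\le\liminf_{n\to\infty}C\Vert y_n-x\Vert^{d+\beta}=0.$$

Hence $d(\tilde X_x,X_x)=0$ and, so, $\tilde X_x=X_x$ almost surely. ⬜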

Completing the proof by extending to unbounded index sets is now almost a formality.

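Proof of Theorem 2: For each positive integer n, let $S_n$ be the set of $x\in S$ with $\Vert x\Vert\le n$. This is an increasing sequence of bounded subsets of S, which eventually contains any given bounded subset. Lemma 5 provides continuous modifications $X^n$ of $\{X_x\}_{x\in S_n}$ which, furthermore, are almost surely $\gamma$-Hölder continuous for each $\gamma<\beta/\alpha$. It is standard that, up to probability one, there can be at most one continuous modification on each set. To be precise, choosing countable dense subsets $S'_n\subseteq S_n$, the set $A$ of all $\omega\in\Omega$ for which $X^m_x(\omega)=X^n_x(\omega)$ for all $m\le n$ and $x\in S'_m$ is measurable with probability one, being a countable intersection of events of probability one. Furthermore, by continuity, $X^m$ and $X^n$ agree on all of $S_m$ on the event A, for all $m\le n$. Hence, fixing any $e\in E$, the required global modification is given by

$$\tilde X_x=\begin{cases}X^n_x,&\text{on }A,\text{ for any }n\text{ with }x\in S_n,\\e,&\text{otherwise.}\end{cases}$$

This is clearly $\gamma$-Hölder continuous on any bounded subset of S, since it either agrees with $X^n$ for sufficiently large n or is constant. ⬜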

when we apply Kolmogorov continuity thm, do we require process X_t to be separable?

I’m not sure what you mean by X_t is separable. We do require the metric space in which X takes values to be separable. This is only so that d(X_s,X_t) is a measurable random variable. Even if the metric space is not separable, but you know that these random variables are measurable, then the theorem still works. Even if it is not measurable, so that the probabilities are not even defined, the theorem will still work as long as you interpret the probabilities as outer measures.

Great! Thank you for the explanation!

Thanks a lot for your post. One can easily show that Theorem 1 is sharp. For example, define a real-valued stochastic process as X(t) = I_{U =< t } for all t \in [0,1], where "I" is the indicator function and U is a uniformly distributed random variable on the range [0,1]. Then we have E [ |X(t)-X(s)|^\alpha ] = |t-s| for all \alpha but obviously X has no continuous sample paths. Do you know an example that shows Theorem 2 is also sharp for example for the case d = 2? Thank you in advance!