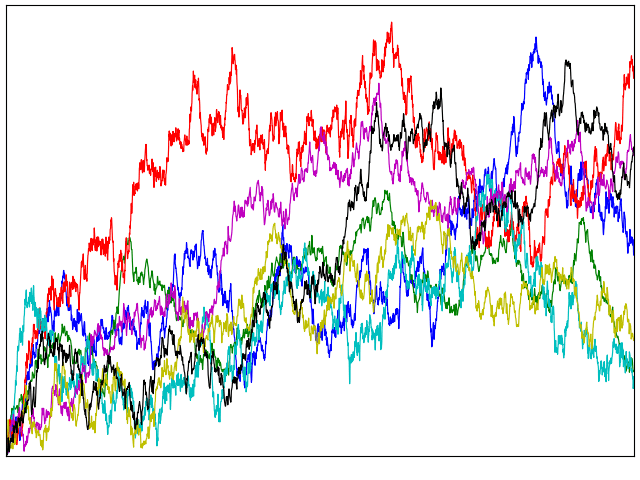

If $X$ is standard Brownian motion, what is the distribution of its absolute maximum $|X|^*_t=\sup_{s\le t}|X_s|$ over a time interval $[0,t]$? Previously, I looked at how the reflection principle can be used to determine that the maximum $X^*_t=\sup_{s\le t}X_s$ has the same distribution as $|X_t|$. This is not the same thing as the maximum of the absolute value though, which is a more difficult quantity to describe. As a first step, $|X|^*_t$ is clearly at least as large as $X^*_t$, from which it follows that it stochastically dominates $|X_t|$.

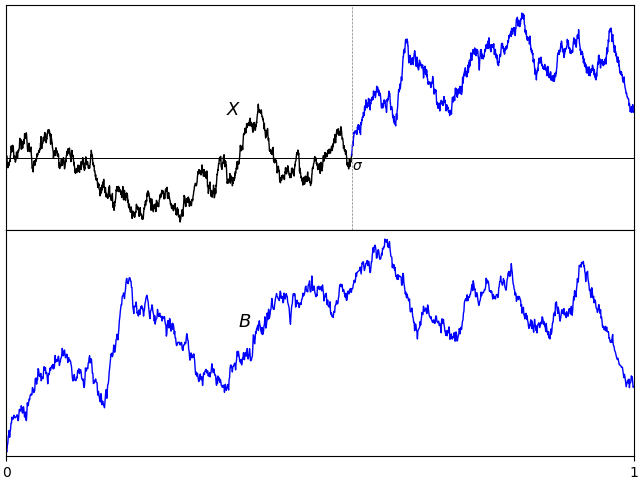

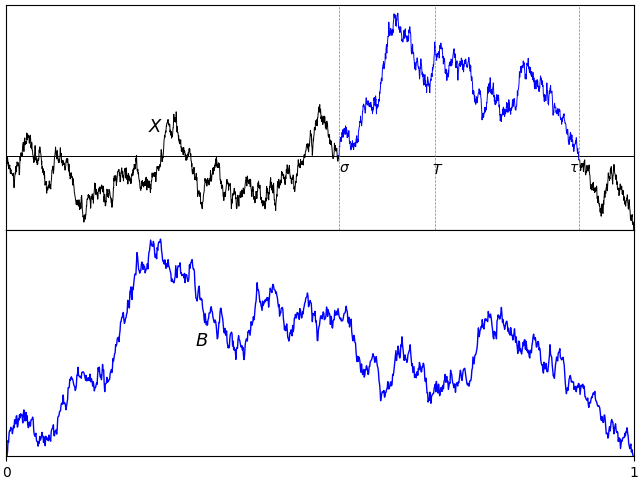
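As a rough numerical sanity check of these comparisons, the following sketch simulates discretized Brownian paths using only Python's standard library and estimates three exceedance probabilities at a fixed level. The function names, the level `a = 1`, the step count and the seed are illustrative choices, not anything taken from the post. The time grid slightly underestimates the true extremes, so the agreement between the running maximum and $|X_t|$ is only approximate.

```python
import math
import random


def simulate_extremes(t=1.0, n_steps=500, n_paths=2000, seed=0):
    """Simulate discretized Brownian paths on [0, t].

    Returns a list of (terminal value, running max, running min) tuples.
    The discrete grid slightly underestimates the true extremes.
    """
    rng = random.Random(seed)
    sd = math.sqrt(t / n_steps)  # standard deviation of each increment
    results = []
    for _ in range(n_paths):
        x = hi = lo = 0.0
        for _ in range(n_steps):
            x += rng.gauss(0.0, sd)
            hi = max(hi, x)
            lo = min(lo, x)
        results.append((x, hi, lo))
    return results


def exceed_fraction(values, a):
    """Empirical probability that a value exceeds the level a."""
    return sum(v > a for v in values) / len(values)


paths = simulate_extremes()
a = 1.0
p_terminal = exceed_fraction([abs(x) for x, _, _ in paths], a)
p_max = exceed_fraction([hi for _, hi, _ in paths], a)
p_abs_max = exceed_fraction([max(hi, -lo) for _, hi, lo in paths], a)

print(f"P(|X_t| > a)   ~ {p_terminal:.3f}")
print(f"P(X*_t > a)    ~ {p_max:.3f}")
print(f"P(|X|*_t > a)  ~ {p_abs_max:.3f}")
```

On every simulated path the absolute maximum is at least as large as both the running maximum and $|X_t|$, so the third estimate can never be smaller than the other two.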

I would like to go further and precisely describe the distribution of $|X|^*_t$. What is the probability that it exceeds a fixed positive level $a$? For this to occur, the supremum of either $X$ or $-X$ must exceed $a$. Denoting the minimum and maximum by

$$X^m_t=\inf_{s\le t}X_s,\qquad X^M_t=\sup_{s\le t}X_s,$$

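then $|X|^*_t$ is the maximum of $X^M_t$ and $-X^m_t$. I have switched notation a little here, and am using $X^M$ to denote what was previously written as $X^*$. This is just to use similar notation for both the minimum and maximum. Using inclusion-exclusion, the probability that the absolute maximum is greater than a level $a$ is,

$$\mathbb{P}\left(|X|^*_t>a\right)=\mathbb{P}\left(X^M_t>a\right)+\mathbb{P}\left(X^m_t<-a\right)-\mathbb{P}\left(X^M_t>a\text{ and }X^m_t<-a\right).$$
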
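As $X^M_t$ has the same distribution as $|X_t|$ and, by symmetry, so does $-X^m_t$, we obtain

$$\mathbb{P}\left(|X|^*_t>a\right)=2\,\mathbb{P}\left(|X_t|>a\right)-\mathbb{P}\left(X^M_t>a\text{ and }X^m_t<-a\right)=4\,\mathbb{P}\left(X_t>a\right)-\mathbb{P}\left(X^M_t>a\text{ and }X^m_t<-a\right).$$
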
This hasn't really answered the question. All we have done is to re-express the probability in terms of both the minimum and maximum being beyond a level. For large values of $a$ it does, however, give a good approximation. The probability of the Brownian motion reaching a large positive value $a$ and also dropping to the large negative value $-a$ within the same interval will be vanishingly small, so the final term in the identity above can be neglected. This gives an asymptotic approximation as $a$ tends to infinity,

$$\mathbb{P}\left(|X|^*_t>a\right)\sim4\,\mathbb{P}\left(X_t>a\right)\sim\sqrt{\frac{8t}{\pi a^2}}\,e^{-\frac{a^2}{2t}}.\tag{1}$$

The last expression here just uses the fact that $X_t$ is centered Gaussian with variance $t$, together with the standard approximation for the tail of the normal distribution, $\mathbb{P}(X_t>a)\sim\sqrt{\frac{t}{2\pi a^2}}\,e^{-\frac{a^2}{2t}}$ as $a\to\infty$.
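To see how quickly the Gaussian tail approximation takes hold, here is a minimal sketch comparing $4\,\mathbb{P}(X_t>a)$, computed exactly through the complementary error function, with the expression $\sqrt{8t/(\pi a^2)}\,e^{-a^2/(2t)}$. The latter is what the standard normal tail estimate gives and is assumed here to be the final expression in (1). The function names and the chosen levels are illustrative only.

```python
import math


def normal_tail(a, t):
    """Exact P(X_t > a) for X_t ~ N(0, t), via the complementary error function."""
    return 0.5 * math.erfc(a / math.sqrt(2.0 * t))


def tail_approximation(a, t):
    """Large-a approximation sqrt(8t / (pi a^2)) * exp(-a^2 / (2t)) to 4 P(X_t > a)."""
    return math.sqrt(8.0 * t / (math.pi * a * a)) * math.exp(-a * a / (2.0 * t))


t = 1.0
for a in (0.1, 1.0, 2.0, 4.0, 6.0):
    exact = 4.0 * normal_tail(a, t)
    approx = tail_approximation(a, t)
    print(f"a = {a:4.1f}   4 P(X_t > a) = {exact:.3e}   "
          f"approximation = {approx:.3e}   ratio = {approx / exact:.4f}")
```

The ratio approaches 1 from above as the level grows, while at small levels the approximation overshoots badly.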

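For small values of $a$, approximation (1) does not work well at all. We know that the left-hand side, $\mathbb{P}(|X|^*_t>a)$, should tend to 1, whereas $4\,\mathbb{P}(X_t>a)$ will tend to 2, and the final expression diverges. In fact, it can be shown that

$$\mathbb{P}\left(|X|^*_t<a\right)\sim\frac{4}{\pi}\,e^{-\frac{t\pi^2}{8a^2}}\tag{2}$$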

as $a\to0$. I gave a direct proof in this math.stackexchange answer. In this post, I will look at how we can compute joint distributions of the minimum, maximum and terminal value of Brownian motion, from which limits such as (2) will follow.
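For a numerical feel for the small-level regime, the sketch below evaluates the classical alternating series for the probability that Brownian motion stays inside $(-a,a)$ up to time $t$,

$$\mathbb{P}\left(|X|^*_t<a\right)=\frac{4}{\pi}\sum_{n=0}^\infty\frac{(-1)^n}{2n+1}\exp\left(-\frac{(2n+1)^2\pi^2t}{8a^2}\right),$$

and compares it with its leading term $\frac{4}{\pi}e^{-t\pi^2/(8a^2)}$. The series is a standard result that is assumed here rather than derived in this excerpt, and the function names and truncation level are illustrative.

```python
import math


def stay_probability(a, t, n_terms=200):
    """P(sup_{s<=t} |X_s| < a) from the classical alternating series.

    The series is assumed (not derived here); n_terms truncates the sum.
    """
    total = 0.0
    for n in range(n_terms):
        k = 2 * n + 1
        total += (-1) ** n / k * math.exp(-(k * math.pi) ** 2 * t / (8.0 * a * a))
    return 4.0 / math.pi * total


def small_level_approximation(a, t):
    """Leading term (4 / pi) * exp(-pi^2 t / (8 a^2)) of the series."""
    return 4.0 / math.pi * math.exp(-math.pi ** 2 * t / (8.0 * a * a))


t = 1.0
for a in (0.25, 0.5, 1.0, 2.0):
    exact = stay_probability(a, t)
    approx = small_level_approximation(a, t)
    print(f"a = {a:4.2f}   series = {exact:.6e}   "
          f"leading term = {approx:.6e}   ratio = {exact / approx:.6f}")
```

For small levels the ratio is indistinguishable from 1. For larger levels the leading term alone overestimates the probability, and the large-level approximation $1-4\,\mathbb{P}(X_t>a)$ becomes the accurate one instead.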